The dexterity gap: from human hand to robotic hand

Look at your own hand. As you read this, it is holding your phone or clicking your mouse with seemingly effortless grace. With over 20 degrees of freedom, human hands possess extraordinary dexterity: they can grip a heavy hammer, rotate a screwdriver, or instantly adjust when something slips.

With a structure similar to that of human hands, dexterous robotic hands offer great potential:

Universal adaptability: Handling diverse objects, from delicate needles to basketballs, and adapting to each unique challenge in real time.

Fine manipulation: Executing complex tasks such as turning a key, using scissors, and performing surgical procedures, all of which are impossible with simple grippers.

Skill transfer: Their similarity to human hands makes them ideal for learning from vast amounts of human demonstration data.

Despite this potential, most current robots still rely on simple “grippers” because dexterous manipulation is so difficult. These pliers-like grippers can only perform repetitive tasks in structured environments. This “dexterity gap” severely limits the role robots can play in our daily lives.

Among all manipulation skills, grasping is the most fundamental. It is the gateway through which many other capabilities emerge. Without reliable grasping, robots cannot pick up tools, manipulate objects, or perform complex tasks. In this work, we therefore focus on giving dexterous robotic hands the ability to robustly grasp diverse objects.

The challenge: why dexterous grasping remains elusive

Humans can grasp almost any object with minimal conscious effort. The path to dexterous robotic grasping, however, is fraught with fundamental challenges that have stymied researchers for decades:

High-dimensional control complexity. With 20+ degrees of freedom, dexterous hands present an astronomically large control space. Each finger’s movement affects the entire grasp, which makes it extremely difficult to determine optimal finger trajectories and force distributions in real time. Which finger should move? How much force should be applied? How should the hand adjust in real time? These seemingly simple questions reveal the extraordinary complexity of dexterous grasping.

Generalization across diverse object shapes. Different objects demand fundamentally different grasp strategies. For example, spherical objects require enveloping grasps, while elongated objects need precision grips. The system must generalize across this vast diversity of shapes, sizes, and materials without explicit programming for each category.

Shape uncertainty under monocular vision. For practical deployment in daily life, robots must rely on single-camera systems, the most accessible and cost-effective sensing solution. We also cannot assume prior knowledge of object meshes, CAD models, or detailed 3D information. This creates fundamental uncertainty: depth ambiguity, partial occlusions, and perspective distortions make it difficult to perceive object geometry accurately and plan appropriate grasps.

Our approach: RobustDexGrasp

To address these fundamental challenges, we present RobustDexGrasp, a novel framework that tackles each challenge with a targeted solution:

Teacher-student curriculum for high-dimensional control. We trained our system through a two-stage reinforcement learning process. First, a “teacher” policy learns ideal grasping strategies through extensive exploration in simulation, using privileged information (full object shape and tactile sensing). Then, a “student” policy learns from the teacher using only real-world perception (a single-view point cloud and noisy joint positions) and adapts to real-world disturbances.

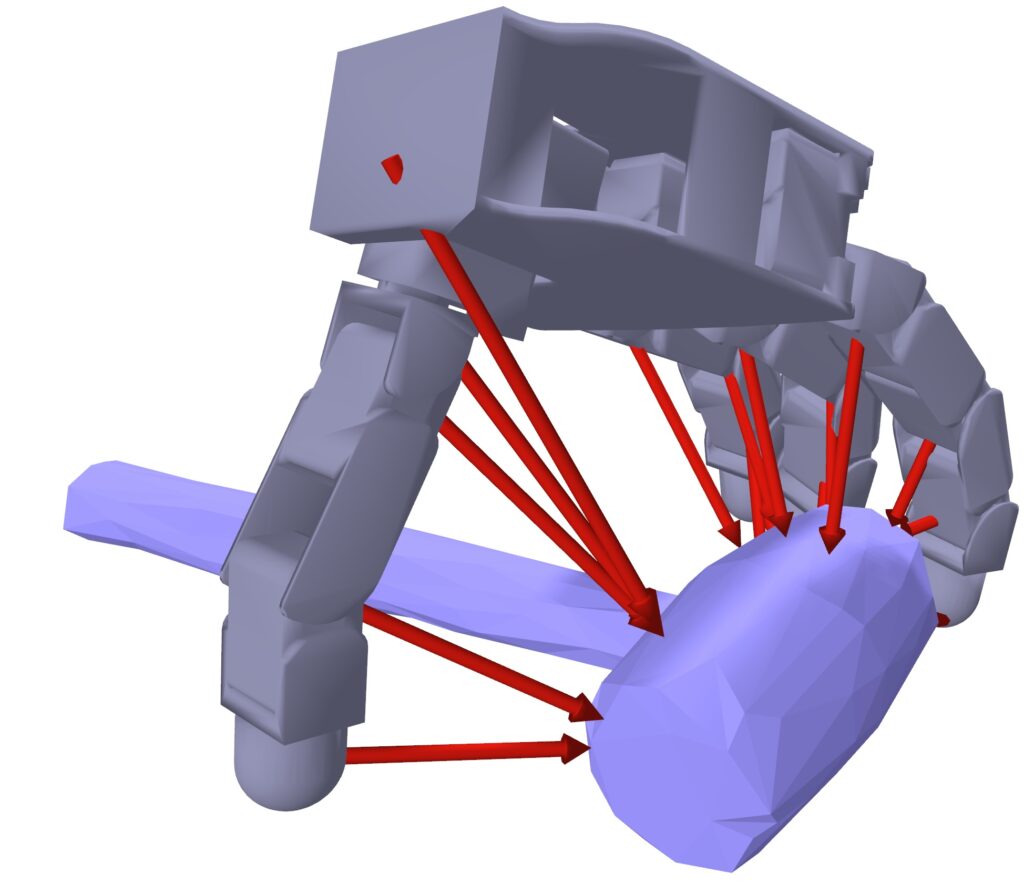
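The two-stage teacher-student idea can be illustrated with a toy distillation loop. This is a minimal sketch built on simplifying assumptions that are not the authors' implementation:

- The “teacher” is a fixed linear map standing in for an RL-trained policy.
- All dimensions (`PRIV_DIM`, `REAL_DIM`, `ACT_DIM`) are hypothetical.
- Distillation is plain supervised regression (behavior cloning) on sampled states, not whatever online scheme the real system uses.

The sketch shows only the core point: the student never sees the privileged state, yet it learns to reproduce the teacher's actions as well as its partial, noisy observations allow.

```python
import numpy as np

rng = np.random.default_rng(0)

PRIV_DIM = 8   # hypothetical: privileged state (e.g. full object shape, tactile signals)
REAL_DIM = 5   # hypothetical: the part of the state the student can observe
ACT_DIM = 3    # hypothetical: action dimension

# Stage 1 stand-in: the real teacher is trained with RL in simulation.
# Here it is simply a fixed linear map from privileged state to action.
W_teacher = rng.normal(size=(ACT_DIM, PRIV_DIM))


def teacher_action(priv):
    """Teacher acts on the full privileged state. priv: (B, PRIV_DIM)."""
    return priv @ W_teacher.T


def student_observation(priv, noise_std=0.05):
    """Student sees only part of the state, corrupted by sensor noise."""
    noise = noise_std * rng.normal(size=(priv.shape[0], REAL_DIM))
    return priv[:, :REAL_DIM] + noise


def distill(num_samples=5000):
    """Stage 2: regress the teacher's actions from the student's observations."""
    priv = rng.normal(size=(num_samples, PRIV_DIM))
    obs = student_observation(priv)
    targets = teacher_action(priv)
    W_student, *_ = np.linalg.lstsq(obs, targets, rcond=None)
    return W_student  # shape (REAL_DIM, ACT_DIM)


def imitation_mse(W_student, num_samples=2000):
    """Mean squared gap between student and teacher actions on fresh states."""
    priv = rng.normal(size=(num_samples, PRIV_DIM))
    pred = student_observation(priv) @ W_student
    return float(np.mean((pred - teacher_action(priv)) ** 2))


W_student = distill()
student_mse = imitation_mse(W_student)
# Baseline: a student that always outputs zero actions.
baseline_mse = imitation_mse(np.zeros((REAL_DIM, ACT_DIM)))
print(f"student MSE {student_mse:.3f} vs. zero-policy MSE {baseline_mse:.3f}")
```

The student observes only five of the eight state dimensions, so its error cannot reach zero. The remaining gap is the price of giving up privileged information.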

Hand-centric “intuition” for shape generalization. Instead of capturing full 3D shape features, our method builds a simple “mental map” that answers only one question: “Where are the surfaces relative to my fingers right now?” This intuitive approach ignores irrelevant details (such as color or decorative patterns) and focuses only on what matters for the grasp. It is the difference between memorizing every detail of a chair and simply knowing where to put your hands to lift it: one is efficient and adaptable, the other is unnecessarily complicated.
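A hand-centric representation of this kind can be sketched as a per-keypoint nearest-surface query. This is a minimal illustration under stated assumptions, not the paper's exact feature:

- The choice of keypoints is hypothetical.
- Each keypoint is described by the offset and distance to the closest observed point.
- The helper name `hand_centric_features` is ours.

Because it sees only xyz positions, the feature is blind to color and texture. Its size depends on the number of keypoints K, not on the object's complexity.

```python
import numpy as np


def hand_centric_features(keypoints, cloud):
    """Describe the observed object surface relative to each hand keypoint.

    keypoints: (K, 3) positions of fingertips/phalanges/palm (hypothetical choice)
    cloud:     (N, 3) single-view object point cloud in the same frame
    returns:   (K, 4) array; each row is [dx, dy, dz, distance] to the
               closest observed surface point
    """
    diffs = cloud[None, :, :] - keypoints[:, None, :]   # (K, N, 3) offsets
    dists = np.linalg.norm(diffs, axis=-1)              # (K, N) distances
    nearest = np.argmin(dists, axis=1)                  # closest point per keypoint
    rows = np.arange(keypoints.shape[0])
    offsets = diffs[rows, nearest]                      # (K, 3)
    return np.concatenate([offsets, dists[rows, nearest][:, None]], axis=1)


# Usage: a flat patch of points at z = 0 and two fingertips hovering above it.
xs, ys = np.meshgrid(np.linspace(-0.05, 0.05, 11), np.linspace(-0.05, 0.05, 11))
patch = np.stack([xs.ravel(), ys.ravel(), np.zeros(xs.size)], axis=1)
fingertips = np.array([[0.0, 0.0, 0.02], [0.03, 0.0, 0.05]])
print(hand_centric_features(fingertips, patch))
```

The brute-force distance matrix costs O(K·N). For large clouds, a KD-tree such as SciPy's `cKDTree` would answer the same nearest-point query more cheaply.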

Multi-modal perception for uncertainty reduction. Instead of relying on vision alone, we combine the camera’s view with the hand’s “body awareness” (proprioception, i.e., knowing where its joints are) and a reconstructed “sense of touch” to cross-check and verify what the camera sees. It is like squinting at something unclear, then reaching out to touch it to be sure. This multi-sense approach lets the robot handle tricky objects that would confuse vision-only systems. Grasping a transparent glass becomes possible because the hand “knows” the glass is there, even when the camera struggles to see it clearly.
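The cross-checking idea can be sketched as a contact estimate that accepts either of two independent cues. We stress that this is a hypothetical heuristic of ours, not the reconstruction used in RobustDexGrasp:

- A visual cue: a fingertip lies close to the observed point cloud.
- A proprioceptive cue: a finger's joints lag behind their commanded positions, as if blocked by something.

The thresholds `CONTACT_DIST_M` and `BLOCKED_ERR_RAD` are illustrative assumptions. The final observation simply concatenates the three modalities.

```python
import numpy as np

# Hypothetical thresholds, chosen only for illustration.
CONTACT_DIST_M = 0.01    # fingertip within 1 cm of the observed surface
BLOCKED_ERR_RAD = 0.05   # joint lags its command by more than ~3 degrees


def estimate_contact(surface_dist, joint_cmd, joint_meas, finger_of_joint):
    """Per-finger contact guess that cross-checks vision against proprioception.

    surface_dist:    (F,) distance from each fingertip to the observed point cloud
    joint_cmd:       (J,) commanded joint positions
    joint_meas:      (J,) measured (noisy) joint positions
    finger_of_joint: (J,) index of the finger each joint belongs to
    """
    vision_contact = surface_dist < CONTACT_DIST_M
    # A finger whose joints cannot reach their targets is probably pressing on
    # something, even if the camera did not see the surface (e.g. clear glass).
    tracking_err = np.abs(joint_cmd - joint_meas)
    worst_err = np.zeros(surface_dist.shape[0])
    np.maximum.at(worst_err, finger_of_joint, tracking_err)
    proprio_contact = worst_err > BLOCKED_ERR_RAD
    return vision_contact | proprio_contact


def build_observation(surface_feats, joint_meas, contact):
    """Concatenate the three modalities into one flat policy input."""
    return np.concatenate([surface_feats.ravel(), joint_meas, contact.astype(float)])


# Usage: two fingers with two joints each.
surface_feats = np.array([[0.0, 0.0, -0.005, 0.005],   # finger 0 sees a surface 5 mm away
                          [0.0, 0.0, -0.2, 0.2]])      # finger 1 sees nothing nearby
joint_cmd = np.array([0.5, 0.5, 0.8, 0.8])
joint_meas = np.array([0.5, 0.49, 0.7, 0.8])            # finger 1's first joint is blocked
contact = estimate_contact(surface_feats[:, 3], joint_cmd, joint_meas,
                           np.array([0, 0, 1, 1]))
obs = build_observation(surface_feats, joint_meas, contact)
```

In the usage example, finger 1 is flagged as touching even though vision reports no nearby surface. This is the kind of case the “transparent glass” example describes.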

The results: from laboratory to reality

Trained on just 35 simulated objects, our system demonstrates excellent real-world capabilities:

Generalization: It achieved a 94.6% success rate across a diverse test set of 512 real-world objects, including challenging items such as thin boxes, heavy tools, transparent bottles, and soft toys.

Robustness: The robot maintained a secure grip even when a significant external force (equivalent to a 250 g weight) was applied to the grasped object, showing far greater resilience than previous state-of-the-art methods.

Adaptation: When objects were accidentally bumped or began to slip from its grasp, the policy dynamically adjusted finger positions and forces in real time to recover. This demonstrates a level of closed-loop control that was previously difficult to achieve.
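For scale, the 250 g disturbance in the robustness test corresponds to a force of roughly 2.45 N. This assumes the equivalence refers to the weight of a 250 g mass under standard gravity (g ≈ 9.81 m/s²):

$$
F = m\,g \approx 0.25\,\mathrm{kg} \times 9.81\,\mathrm{m/s^2} \approx 2.45\,\mathrm{N}
$$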

Beyond picking things up: enabling a new era of robotic manipulation

RobustDexGrasp represents a crucial step toward closing the dexterity gap between humans and robots. By enabling robots to grasp nearly any object with human-like reliability, we are unlocking new possibilities for robotic applications beyond grasping itself. We demonstrated how it can be seamlessly integrated with other AI modules to perform complex, long-horizon manipulation tasks:

Grasping in clutter: Using an object segmentation model to identify the target object, our method enables the hand to pick a specific item out of a crowded pile despite interference from other objects.

Task-oriented grasping: With a vision-language model as the high-level planner and our method providing the low-level grasping skill, the robotic hand can execute grasps for specific tasks, such as clearing the table or playing chess with a human.

Dynamic interaction: Using an object tracking module, our method can successfully control the robotic hand to grasp objects moving on a conveyor belt.
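How an upstream module might hand its target to the grasping policy can be sketched with two small helpers:

- For clutter, a per-point segmentation mask filters the scene cloud down to the target object.
- For a conveyor belt, the target's points are extrapolated forward with a constant-velocity estimate, compensating for latency.

The function names, the per-point mask format, and the constant-velocity model are all assumptions made for illustration. They are not the interfaces of the modules used in this work. In the task-oriented setting, the mask could equally come from whichever object the planner selects.

```python
import numpy as np


def select_target_points(cloud, target_mask):
    """Grasping in clutter: keep only points the segmenter labeled as the target.

    cloud:       (N, 3) scene point cloud
    target_mask: (N,) boolean per-point label (hypothetical segmenter output)
    """
    return cloud[np.asarray(target_mask, dtype=bool)]


def extrapolate_points(points, velocity, latency_s):
    """Dynamic interaction: shift points forward assuming constant velocity,
    e.g. with the velocity estimated by an object tracker on a conveyor belt."""
    return points + np.asarray(velocity, dtype=float) * latency_s


# Usage: a four-point "scene" where points 0 and 2 belong to the target,
# which moves along x at 0.1 m/s; we compensate for 0.5 s of latency.
scene = np.array([[0.0, 0.0, 0.0], [1.0, 1.0, 1.0], [0.1, 0.0, 0.0], [2.0, 2.0, 2.0]])
target = select_target_points(scene, [True, False, True, False])
predicted = extrapolate_points(target, velocity=[0.1, 0.0, 0.0], latency_s=0.5)
print(predicted)
```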

Looking ahead, we aim to overcome current limitations, such as handling very small objects (which requires a smaller, more anthropomorphic hand) and performing non-prehensile interactions like pushing. The journey to true robotic dexterity is ongoing, and we are excited to be part of it.

Read the work in full

Hui Zhang is a PhD candidate at ETH Zurich.