What are LLMs?

A Large Language Model (LLM) is an advanced AI system designed to perform complex natural language processing (NLP) tasks such as text generation, summarization, and translation. At its core, an LLM is built on a deep neural network architecture known as a transformer, which excels at capturing the intricate patterns and relationships in language. Well-known LLMs include ChatGPT by OpenAI, LLaMa by Meta, Claude by Anthropic, Mistral by Mistral AI, Gemini by Google, and many more.

The Power of LLMs in Today’s Generation:

- Understanding Human Language: LLMs can understand complex queries, analyze context, and respond in ways that sound human-like and nuanced.

- Knowledge Integration Across Domains: Because they are trained on vast, diverse data sources, LLMs can provide insights across fields from science to creative writing.

- Adaptability and Creativity: One of the most exciting aspects of LLMs is their adaptability. They’re capable of generating stories, writing poetry, solving puzzles, and even holding philosophical discussions.

- Problem-Solving Potential: LLMs can handle reasoning tasks by identifying patterns, making inferences, and solving logical problems, demonstrating their capability in supporting complex, structured thought processes and decision-making.

For developers looking to streamline document workflows using AI, tools like the Nanonets PDF AI offer valuable integration options. Coupled with Ministral’s capabilities, these can significantly enhance tasks like document extraction, ensuring efficient data handling. Additionally, tools like Nanonets’ PDF Summarizer can further automate processes by summarizing lengthy documents, aligning well with Ministral’s privacy-first applications.

Automating Day-to-Day Tasks with LLMs:

LLMs can transform the way we handle everyday tasks, driving efficiency and freeing up valuable time. Here are some key applications:

- Email Composition: Generate personalized email drafts quickly, saving time and maintaining a professional tone.

- Report Summarization: Condense lengthy documents and reports into concise summaries, highlighting key points for quick review.

- Customer Support Chatbots: Implement LLM-powered chatbots that can resolve common issues, process returns, and provide product recommendations based on user inquiries.

- Content Ideation: Assist in brainstorming and generating creative content ideas for blogs, articles, or marketing campaigns.

- Data Analysis: Automate the analysis of data sets, generating insights and visualizations without manual input.

- Social Media Management: Craft and schedule engaging posts, interact with comments, and analyze engagement metrics to refine content strategy.

- Language Translation: Provide real-time translation services to facilitate communication across language barriers, ideal for global teams.

To further enhance the capabilities of LLMs, we can leverage Retrieval-Augmented Generation (RAG). This approach lets an LLM retrieve information from external sources at query time and include it in its prompt, enriching responses with up-to-date, contextually relevant data for more informed decision-making and deeper insights.
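A minimal sketch of the retrieve-then-prompt idea behind RAG, assuming plain word overlap as a stand-in for the embedding search a real system would use. The `NOTES` list and all function names here are hypothetical and only illustrate how retrieved text ends up in the prompt:

```python
import re

def tokenize(text: str) -> set[str]:
    """Lowercase a string and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(query: str, documents: list[str], k: int = 2) -> list[str]:
    """Return up to k documents that share the most words with the query."""
    query_words = tokenize(query)
    scored = [(len(query_words & tokenize(doc)), doc) for doc in documents]
    # Stable sort: documents with equal scores keep their original order.
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for score, doc in scored[:k] if score > 0]

def build_rag_prompt(query: str, documents: list[str], k: int = 2) -> str:
    """Place the retrieved snippets ahead of the question in one prompt."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, documents, k))
    return (
        "Use the context below to answer the question.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )

# Hypothetical knowledge base; a real one would live in a document store.
NOTES = [
    "tar -czf archive.tar.gz DIR creates a gzip-compressed archive of DIR.",
    "df -h shows free disk space in human-readable units.",
    "chmod +x FILE makes FILE executable.",
]

if __name__ == "__main__":
    print(build_rag_prompt("how do I check free disk space", NOTES, k=1))
```

The resulting string would be sent to the model in place of the bare question, so the answer can draw on the retrieved snippet.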

One-Click LLM Bash Helper

We will explore an exciting way to use LLMs by building a real-time application called One-Click LLM Bash Helper. This tool uses an LLM to simplify bash terminal usage. Just describe what you want to do in plain language, and it will generate a matching bash command for you. Whether you're a beginner or an experienced user looking for quick solutions, this tool saves time and removes the guesswork, making command-line tasks more accessible than ever!

How it works:

- Open the Bash Terminal: Start by opening the Linux terminal where you want to execute the command.

- Describe the Command: Write a clear and concise description of the task you want to perform in the terminal. For example, “Create a file named abc.txt in this directory.”

- Select the Text: Highlight the task description you just wrote in the terminal so that the tool can process it.

- Press the Trigger Key: Hit the F6 key on your keyboard (the default, which can be changed as needed). This triggers the process: the task description is copied, processed by the tool, and sent to the LLM for command generation.

- Get and Execute the Command: The LLM processes the description and generates the corresponding Linux command, and the tool pastes it into the terminal, ready for you to review and run.

Build On Your Own

Since the One-Click LLM Bash Helper interacts with text in a terminal on your system, it must run locally on the machine. This requirement arises from the need to access the clipboard and capture key presses across different applications, which is not supported in online environments like Google Colab or Kaggle.

To implement the One-Click LLM Bash Helper, we’ll need to set up a few libraries and dependencies that enable the functionality outlined above. It is best to set up a new environment and then install the dependencies.

Steps to Create a New Conda Environment and Install Dependencies

- Open your terminal.

- Create a new Conda environment. You can name the environment (e.g., bash_helper) and specify the Python version you want to use (e.g., Python 3.9):

    conda create -n bash_helper python=3.9

- Activate the new environment:

    conda activate bash_helper

- Install the required libraries:

- Ollama: An open-source project that serves as a powerful and user-friendly platform for running LLMs on your local machine. It acts as a bridge between the complexities of LLM technology and the desire for an accessible and customizable AI experience. Install Ollama by following the instructions at https://github.com/ollama/ollama/blob/main/docs/linux.md, then install its Python client:

    pip install ollama

- To start Ollama and install LLaMa 3.1 8B as our LLM (other models can be used), run the following commands after Ollama is installed. First, start the server and leave it running in a background terminal:

    ollama serve

Then, in another terminal, run the following command to download and start llama3.1:

    ollama run llama3.1

Here are some of the LLMs that Ollama supports; choose one based on your requirements:

| Model | Parameters | Size | Download |
|---|---|---|---|
| Llama 3.2 | 3B | 2.0GB | ollama run llama3.2 |
| Llama 3.2 | 1B | 1.3GB | ollama run llama3.2:1b |
| Llama 3.1 | 8B | 4.7GB | ollama run llama3.1 |
| Llama 3.1 | 70B | 40GB | ollama run llama3.1:70b |
| Llama 3.1 | 405B | 231GB | ollama run llama3.1:405b |
| Phi 3 Mini | 3.8B | 2.3GB | ollama run phi3 |
| Phi 3 Medium | 14B | 7.9GB | ollama run phi3:medium |
| Gemma 2 | 2B | 1.6GB | ollama run gemma2:2b |
| Gemma 2 | 9B | 5.5GB | ollama run gemma2 |
| Gemma 2 | 27B | 16GB | ollama run gemma2:27b |
| Mistral | 7B | 4.1GB | ollama run mistral |
| Moondream 2 | 1.4B | 829MB | ollama run moondream |
| Neural Chat | 7B | 4.1GB | ollama run neural-chat |
| Starling | 7B | 4.1GB | ollama run starling-lm |
| Code Llama | 7B | 3.8GB | ollama run codellama |
| Llama 2 Uncensored | 7B | 3.8GB | ollama run llama2-uncensored |
| LLaVA | 7B | 4.5GB | ollama run llava |
| Solar | 10.7B | 6.1GB | ollama run solar |

- Pyperclip: A Python library designed for cross-platform clipboard manipulation. It allows you to programmatically copy and paste text to and from the clipboard, making it easy to handle text selections.

    pip install pyperclip

- Pynput: A Python library that provides a way to monitor and control input devices, such as keyboards and mice. It allows you to listen for specific key presses and execute functions in response.

    pip install pynput

Code sections:

Create a Python file “helper.py” where all the following code will be added:

- Importing the Required Libraries: In the helper.py file, start by importing the required libraries:

    import pyperclip
    import subprocess
    import threading
    import ollama
    from pynput import keyboard

- Defining the CommandAssistant Class: The CommandAssistant class is the core of the application. When initialized, it starts a keyboard listener using pynput to detect keypresses. The listener continuously monitors for the F6 key, which serves as the trigger for the assistant to process a task description. This setup ensures the application runs passively in the background until activated by the user.

    class CommandAssistant:
        def __init__(self):
            # Start listening for key events
            self.listener = keyboard.Listener(on_press=self.on_key_press)
            self.listener.start()

- Handling the F6 Keypress: The on_key_press method is executed whenever a key is pressed. It checks whether the pressed key is F6. If so, it calls the process_task_description method to start the workflow for generating a Linux command. Any other key presses are safely ignored, so the program keeps running smoothly.

        def on_key_press(self, key):
            try:
                if key == keyboard.Key.f6:
                    # Trigger command generation on F6
                    print("Processing task description...")
                    self.process_task_description()
            except AttributeError:
                pass

- Extracting Task Description: This method begins by simulating the “Ctrl+Shift+C” keypress using xdotool to copy the selected text from the terminal (xdotool is a separate command-line utility and must be installed on the system). The copied text, assumed to be a task description, is then retrieved from the clipboard through pyperclip. A prompt is constructed to instruct the Llama model to generate a single Linux command for the given task. To keep the application responsive, the command generation is run in a separate thread, ensuring the main program stays non-blocking.

        def process_task_description(self):
            # Step 1: Copy the selected text using Ctrl+Shift+C
            subprocess.run(['xdotool', 'key', '--clearmodifiers', 'ctrl+shift+c'])

            # Get the selected text from the clipboard
            task_description = pyperclip.paste()

            # Set up the command-generation prompt
            prompt = (
                "You are a Linux terminal assistant. Convert the following description of a task "
                "into a single Linux command that accomplishes it. Provide only the command, "
                "without any additional text or surrounding quotes:\n\n"
                f"Task description: {task_description}"
            )

            # Step 2: Run command generation in a separate thread
            threading.Thread(target=self.generate_command, args=(prompt,)).start()
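One gap in the method above is that pressing the trigger key with nothing selected still sends a prompt to the model. The sketch below shows one way to factor the prompt into a helper that refuses empty input. `build_prompt` and `PROMPT_HEADER` are hypothetical names, not part of the original script:

```python
from typing import Optional

# Same instruction text as the prompt in process_task_description.
PROMPT_HEADER = (
    "You are a Linux terminal assistant. Convert the following description of a task "
    "into a single Linux command that accomplishes it. Provide only the command, "
    "without any additional text or surrounding quotes:\n\n"
)

def build_prompt(clipboard_text: str) -> Optional[str]:
    """Return the full prompt, or None when the clipboard holds no usable text."""
    # Collapse line breaks and repeated spaces that a terminal selection may contain.
    task = " ".join(clipboard_text.split())
    if not task:
        return None
    return f"{PROMPT_HEADER}Task description: {task}"

if __name__ == "__main__":
    print(build_prompt("Create a file named abc.txt\n in this directory"))
```

Inside `process_task_description`, the caller could skip starting the worker thread whenever `build_prompt` returns `None`.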

- Generating the Command: The generate_command method sends the constructed prompt to the Llama model (llama3.1) via the ollama library. The model responds with a generated command, which is then cleaned to remove any surrounding quotes. The sanitized command is passed to the replace_with_command method for pasting back into the terminal. Any errors during this process are caught and printed so that a failed request does not crash the listener.

        def generate_command(self, prompt):
            try:
                # Query the Llama model for the command
                response = ollama.generate(model="llama3.1", prompt=prompt)
                generated_command = response['response'].strip()

                # Remove any surrounding quotes (if present)
                if generated_command.startswith("'") and generated_command.endswith("'"):
                    generated_command = generated_command[1:-1]
                elif generated_command.startswith('"') and generated_command.endswith('"'):
                    generated_command = generated_command[1:-1]

                # Step 3: Replace the selected text with the generated command
                self.replace_with_command(generated_command)
            except Exception as e:
                print(f"Command generation error: {str(e)}")
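Models do not always follow the “only the command” instruction; a reply may arrive wrapped in backticks or a Markdown code fence, or followed by an explanation. The following is a sketch of a slightly more defensive cleanup step. `clean_command` is a hypothetical helper, and the cases it handles are assumptions about typical model output rather than documented Ollama behavior:

```python
FENCE = "`" * 3  # Markdown code-fence marker

def clean_command(raw: str) -> str:
    """Reduce a model reply to a single bare command line."""
    text = raw.strip()

    # Drop a surrounding Markdown code fence, including an optional language tag.
    if text.startswith(FENCE):
        lines = text.splitlines()[1:]
        if lines and lines[-1].strip().startswith(FENCE):
            lines = lines[:-1]
        text = "\n".join(lines).strip()

    # Keep only the first non-empty line; anything after it is treated as commentary.
    for line in text.splitlines():
        if line.strip():
            text = line.strip()
            break

    # Strip one layer of matching quotes or backticks around the whole command.
    if len(text) >= 2 and text[0] == text[-1] and text[0] in "'\"`":
        text = text[1:-1].strip()
    return text

if __name__ == "__main__":
    print(clean_command(FENCE + "bash\nls -la\n" + FENCE))
```

Like the quote stripping in `generate_command`, this would mangle a command that legitimately starts and ends with the same quote character, so it is a heuristic rather than a parser.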

- Replacing Text in the Terminal: The replace_with_command method takes the generated command and copies it to the clipboard using pyperclip. It then simulates keypresses to clear the terminal input using “Ctrl+C” and “Ctrl+L” and pastes the generated command back into the terminal with “Ctrl+Shift+V.” This automation lets the user immediately review or execute the suggested command without retyping it.

        def replace_with_command(self, command):
            # Copy the generated command to the clipboard
            pyperclip.copy(command)

            # Step 4: Clear the current input using Ctrl+C, then clear the screen with Ctrl+L
            subprocess.run(['xdotool', 'key', '--clearmodifiers', 'ctrl+c'])
            subprocess.run(['xdotool', 'key', '--clearmodifiers', 'ctrl+l'])

            # Step 5: Paste the generated command using Ctrl+Shift+V
            subprocess.run(['xdotool', 'key', '--clearmodifiers', 'ctrl+shift+v'])
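Every simulated keypress above depends on the xdotool binary being installed. As a sketch, the repeated `subprocess.run` calls could be routed through one helper that checks for the binary first. `key_command` and `send_keys` are hypothetical names, and the `runner` parameter exists only so the helper can be exercised without sending real keystrokes:

```python
import shutil
import subprocess
from typing import Callable, Sequence

def key_command(combo: str) -> list[str]:
    """Build the xdotool argument list for one key combination."""
    return ["xdotool", "key", "--clearmodifiers", combo]

def send_keys(
    combos: Sequence[str],
    runner: Callable[[list[str]], object] = subprocess.run,
) -> bool:
    """Send each key combination in order; return False if xdotool is missing."""
    if shutil.which("xdotool") is None:
        print("xdotool was not found on PATH; install it to simulate keypresses.")
        return False
    for combo in combos:
        runner(key_command(combo))
    return True

if __name__ == "__main__":
    # Dry run: print the commands instead of executing them.
    send_keys(["ctrl+c", "ctrl+l", "ctrl+shift+v"], runner=print)
```

With such a helper, `replace_with_command` would reduce to copying the text and calling `send_keys` once with the three combinations.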

- Running the Application: The script creates an instance of the CommandAssistant class and then waits on the listener thread, which keeps the program alive to listen for the F6 key without busy-waiting. The program terminates gracefully upon receiving a KeyboardInterrupt (e.g., when the user presses Ctrl+C).

    if __name__ == "__main__":
        app = CommandAssistant()

        # Keep the script running to listen for key presses
        try:
            app.listener.join()
        except KeyboardInterrupt:
            print("Exiting Command Assistant.")

Save all the above components in the ‘helper.py’ file and run the application using the following command:

    python helper.py

And that's it! You've now built the One-Click LLM Bash Helper. Let’s walk through how to use it.

Workflow

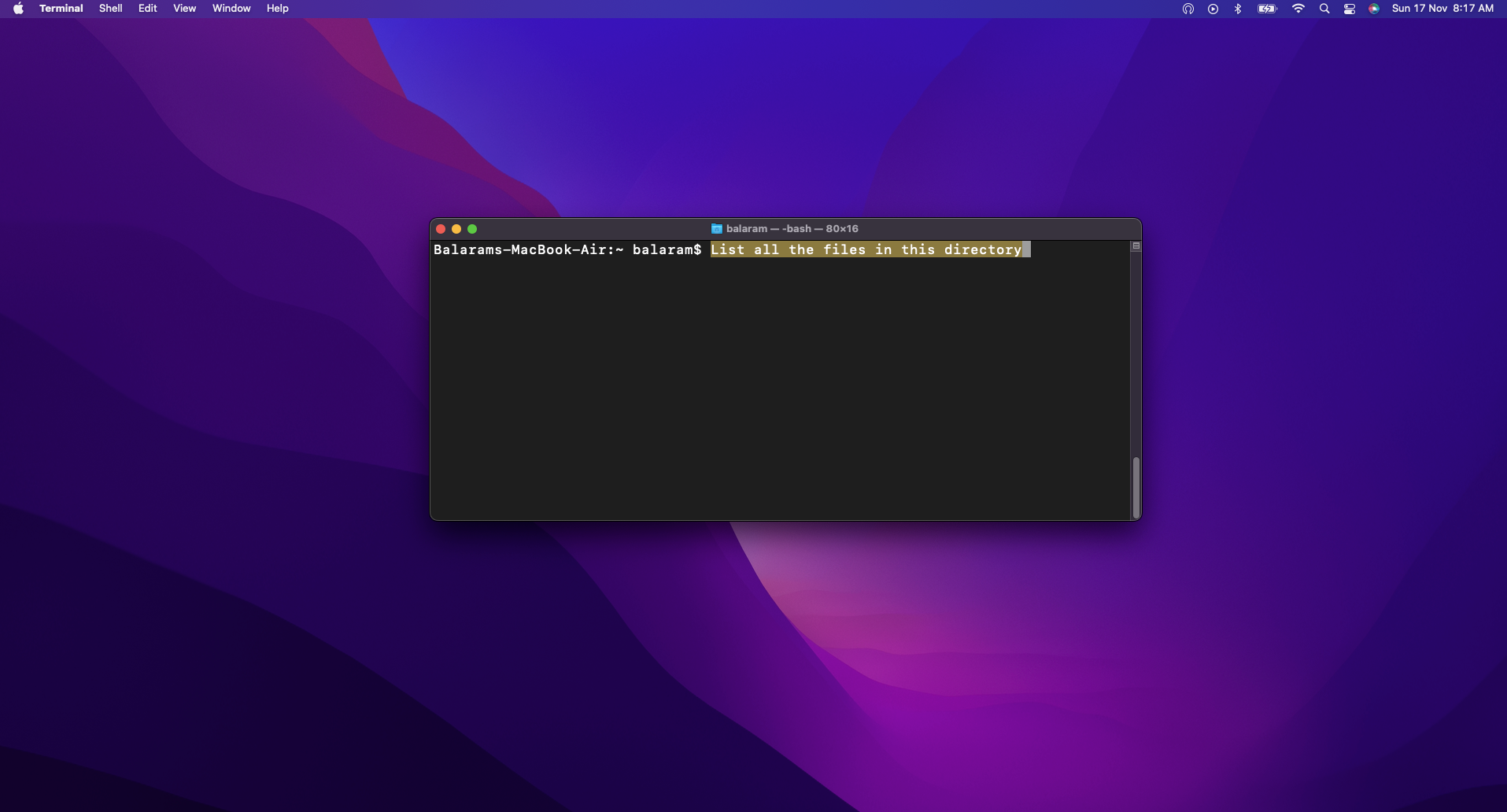

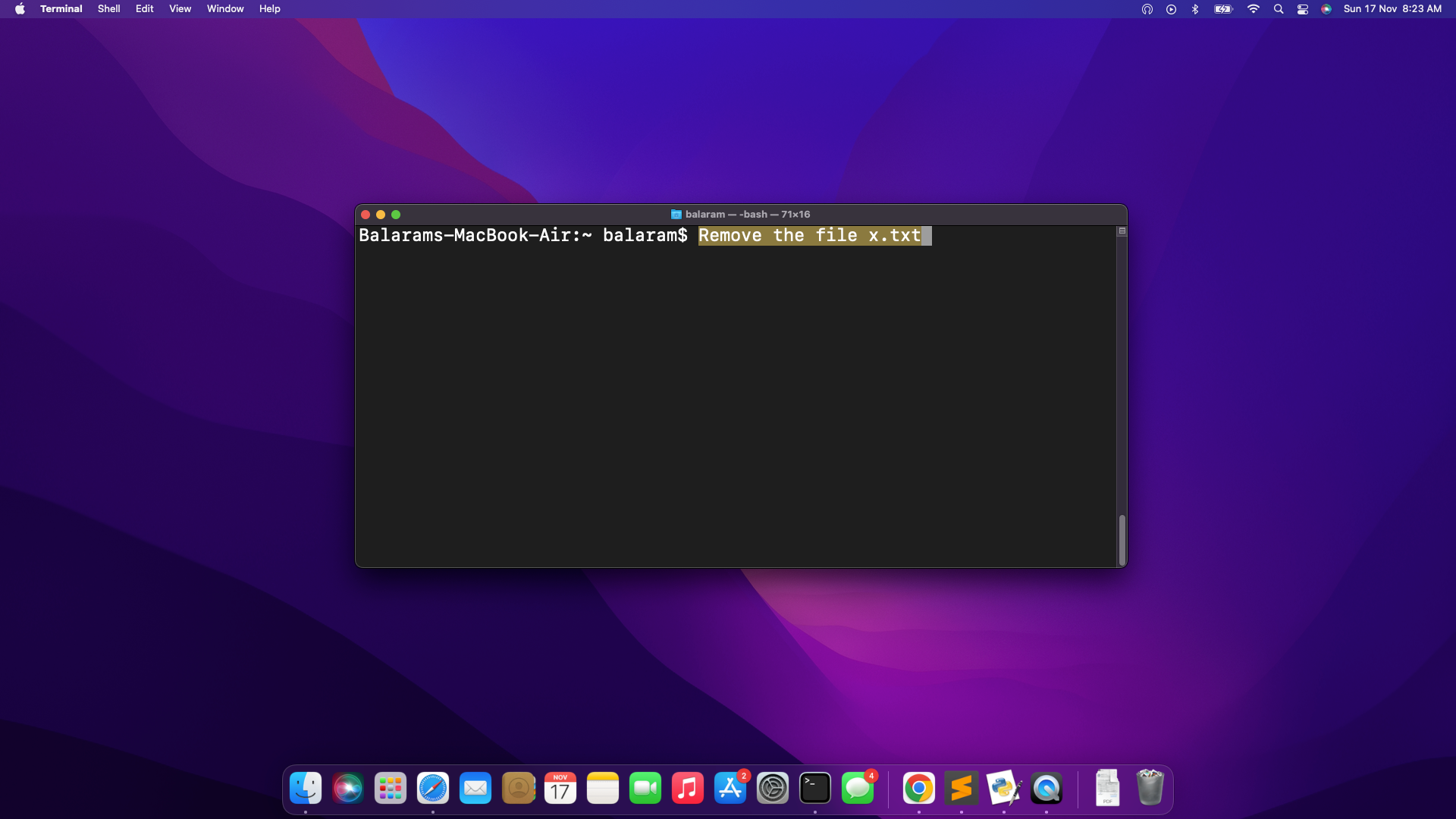

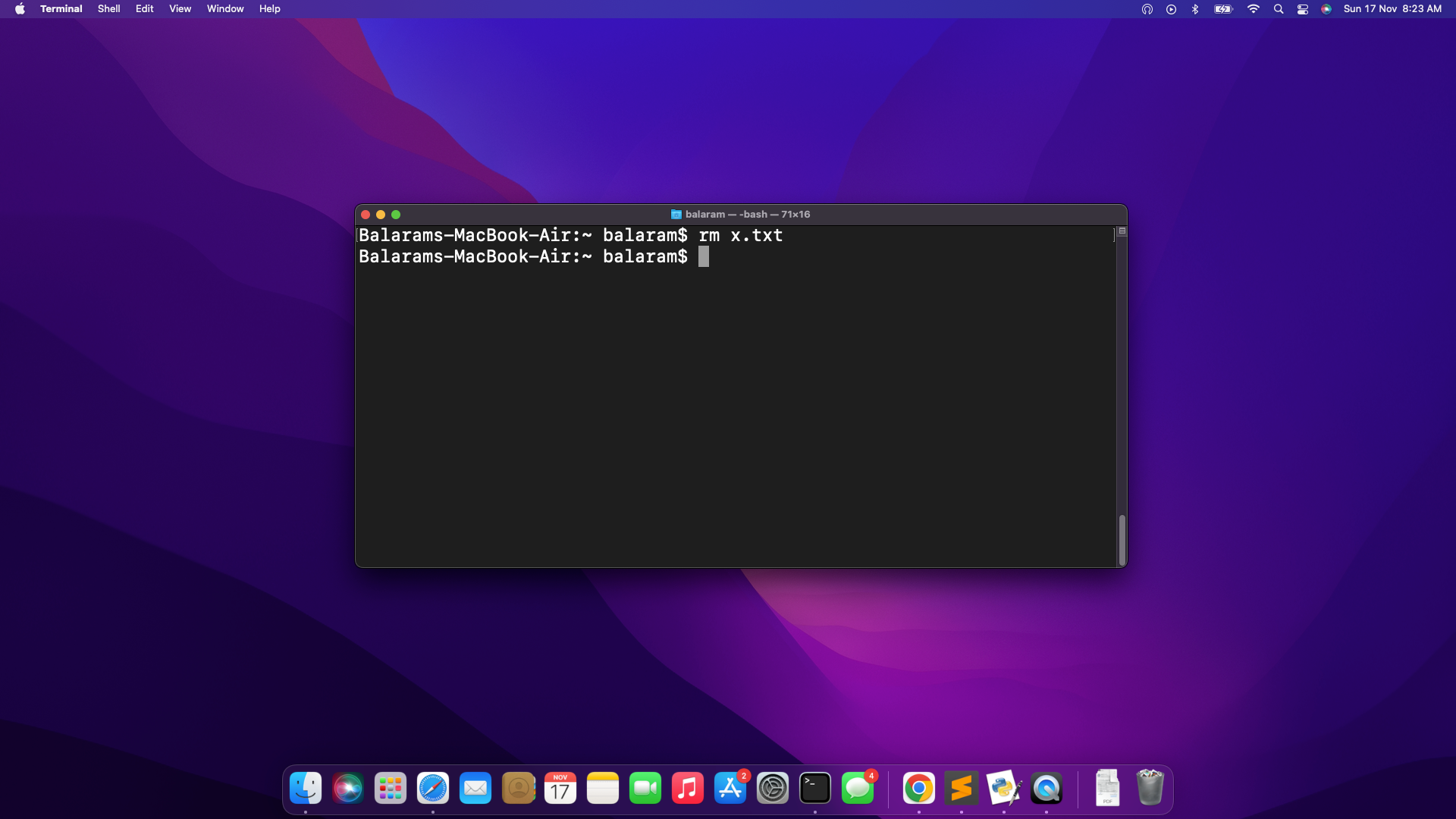

Open a terminal and write a description of the command you want to perform. Then follow the steps below:

- Select Text: After writing the description of the command you need in the terminal, select the text.

- Trigger Translation: Press the F6 key to initiate the process.

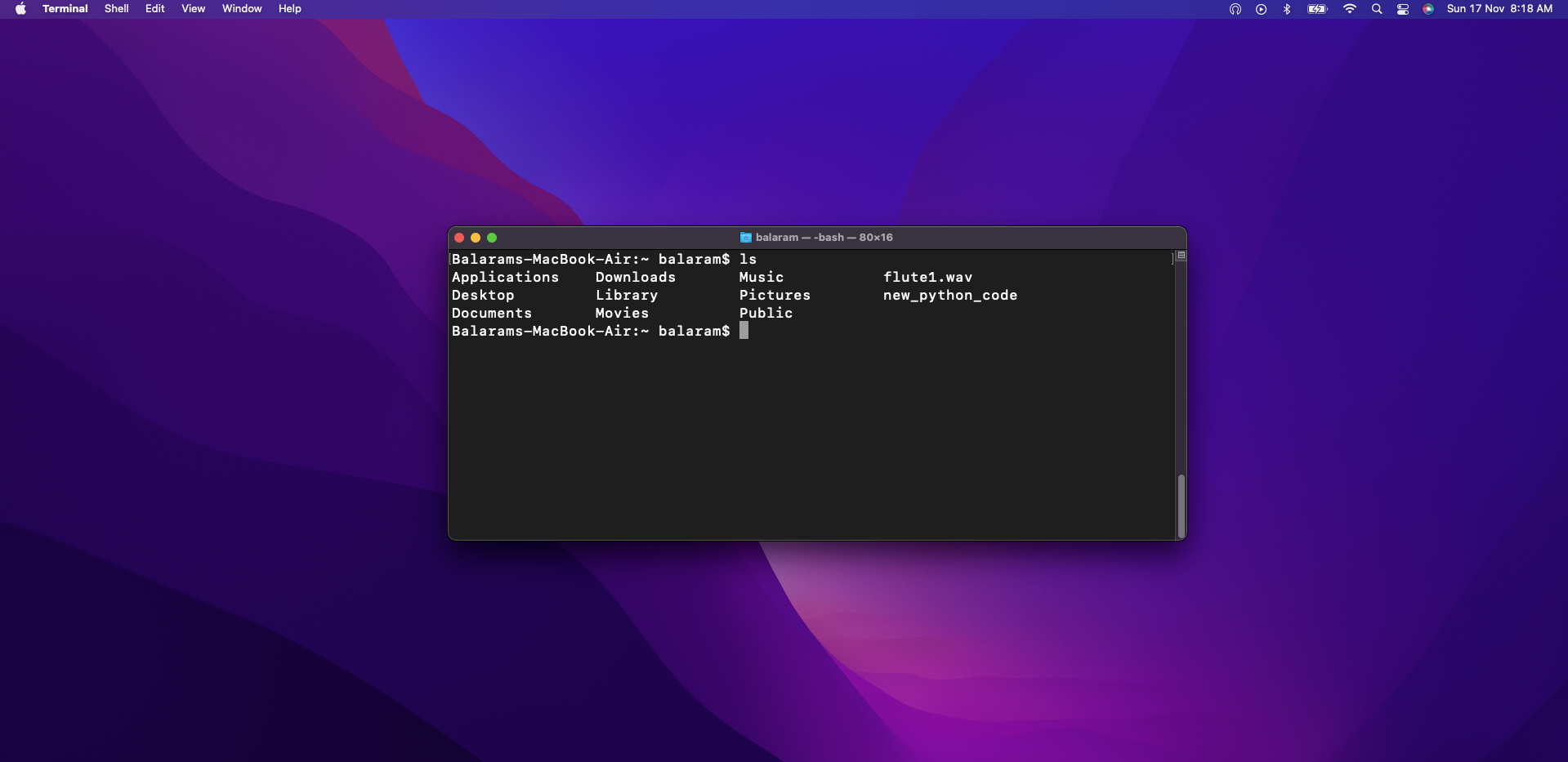

- View Result: The LLM generates the command for the description given by the user, and the tool replaces the text in the bash terminal with it. The command can then be executed.

In this case, for the description “List all the files in this directory”, the command given as output from the LLM was “ls”.

For access to the complete code and further details, please visit this GitHub repo link.

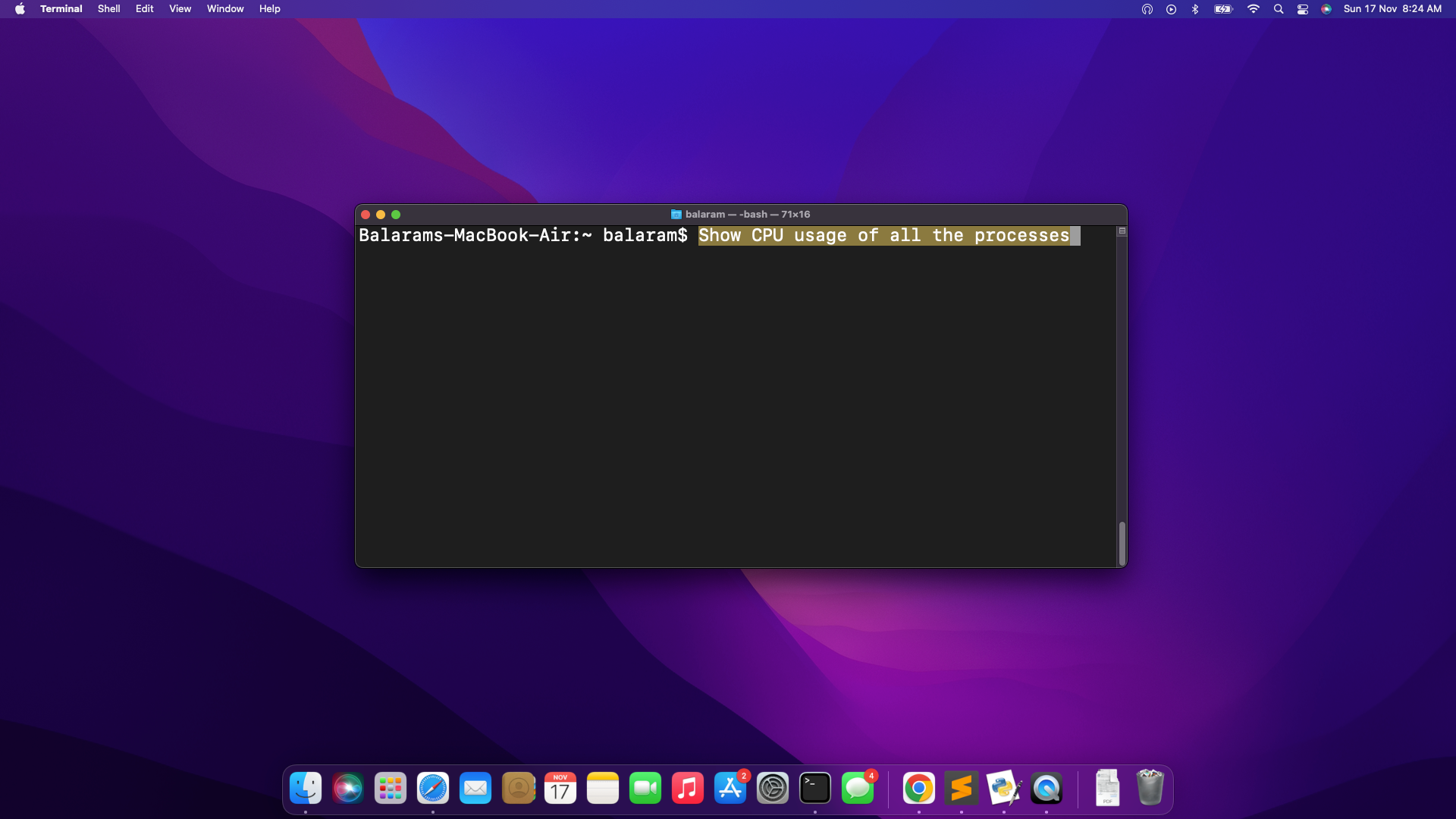

Here are a few more examples of the One-Click LLM Bash Helper in action:

It gave the command “top” upon pressing the trigger key (F6), and after execution it gave the following output:

- Deleting a file with filename

Tips for customizing the assistant

- Choosing the Right Model for Your System: Start by picking the right language model.

Got a Powerful PC? (16GB+ RAM)

- Use llama2:70b or mixtral: they offer excellent-quality code generation but need more compute power.

- Perfect for professional use or when accuracy is crucial.

Running on a Mid-Range System? (8-16GB RAM)

- Use llama2:13b or mistral: they offer a good balance of performance and resource usage.

- Great for daily use and most generation needs.

Working with Limited Resources? (4-8GB RAM)

- llama2:7b or phi are good in this range.

- They’re faster and lighter but still get the job done.

Although these models are recommended, you can use other models according to your needs.

- Personalizing the Keyboard Shortcut: Want to change the F6 key? You can change it to any key, for example ‘T’ for translate, or F2 because it's easier to reach. Just modify the trigger key in the code, and it's good to go.

- Customizing the Assistant: Maybe instead of a bash helper, you need help writing code in a certain programming language (Java, Python, C++). You only need to modify the command-generation prompt: instead of “Linux terminal assistant”, change it to “Python code writer” or to the programming language you prefer.
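For the keyboard-shortcut tip, here is a sketch of a trigger check that accepts either a function key or a letter. It assumes, as I understand pynput's objects, that special keys such as `keyboard.Key.f2` are enum members exposing a `.name` and that printable keys arrive as `KeyCode` objects exposing a `.char`. `matches_trigger` is a hypothetical helper, and the demo uses stand-in objects so it runs without pynput installed:

```python
from types import SimpleNamespace

def matches_trigger(key: object, trigger: str) -> bool:
    """Return True when a pressed key corresponds to the configured trigger name."""
    trigger = trigger.lower()
    name = getattr(key, "name", None)  # special keys, e.g. "f2"
    char = getattr(key, "char", None)  # printable keys, e.g. "t"
    if name is not None:
        return name == trigger
    return char is not None and char.lower() == trigger

if __name__ == "__main__":
    # Stand-ins that mimic the attributes of pynput key objects.
    f2_key = SimpleNamespace(name="f2")
    t_key = SimpleNamespace(char="t")
    print(matches_trigger(f2_key, "F2"), matches_trigger(t_key, "t"))
```

In `on_key_press`, the comparison `key == keyboard.Key.f6` could then become `matches_trigger(key, "f2")`. Note that binding a plain letter fires every time that letter is typed, so a function key is usually the safer choice.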

Limitations

- Resource Constraints: Running large language models often requires substantial hardware. For example, at least 8 GB of RAM is needed to run 7B models, 16 GB to run 13B models, and 32 GB to run 33B models.

- Platform Restrictions: The use of xdotool and specific key combinations makes the tool dependent on Linux systems, and it may not work on other operating systems without modifications.

- Command Accuracy: The tool may occasionally produce incorrect or incomplete commands, especially for ambiguous or highly specific tasks. In such cases, using a more advanced LLM with better contextual understanding may be necessary.

- Limited Customization: Without specialized fine-tuning, generic LLMs may lack contextual adjustments for industry-specific terminology or user-specific preferences.

For tasks like extracting information from documents, tools such as Nanonets’ Chat with PDF have evaluated and used several LLMs like Ministral and can offer a reliable way to interact with content, ensuring accurate data extraction without risk of misrepresentation.
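The RAM rule of thumb from the first limitation can be written as a small lookup. This is only a sketch: the thresholds are the ones quoted above, the function names are hypothetical, and `total_ram_gb` relies on `os.sysconf` values that are available on Linux but not on every platform:

```python
import os
from typing import Optional

# (minimum RAM in GB, largest model class it supports), largest first.
RAM_RULE_OF_THUMB = [(32, "33B"), (16, "13B"), (8, "7B")]

def largest_model_class(ram_gb: float) -> Optional[str]:
    """Return the largest model class the rule of thumb allows, or None below 8 GB."""
    for min_ram, size in RAM_RULE_OF_THUMB:
        if ram_gb >= min_ram:
            return size
    return None

def total_ram_gb() -> float:
    """Total physical memory in GB (Linux; uses POSIX sysconf values)."""
    return os.sysconf("SC_PAGE_SIZE") * os.sysconf("SC_PHYS_PAGES") / 1024 ** 3

if __name__ == "__main__":
    print(largest_model_class(total_ram_gb()))
```

The result is a starting point for choosing a tag from the Ollama model list, not a guarantee that a given model will run well.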