Z.ai has released its next-generation flagship AI model, GLM-5.1. Combining a very large model size with operational efficiency and advanced reasoning, it represents a major step forward for large language models. It improves on earlier GLM models with an advanced Mixture-of-Experts (MoE) framework that lets it perform intricate multi-step operations faster and with more precise results.

GLM-5.1 is also well suited to building agent-based systems that require advanced reasoning. It adds new features that improve both coding and long-context understanding, all of which affect real-world AI applications and developers' workflows.

That makes the launch of GLM-5.1 an important update. In this article, we examine the new model and its capabilities.

GLM-5.1 Model Architecture Components

GLM-5.1 builds on modern LLM design principles by combining efficiency, scalability, and long-context handling in a unified architecture. It stays efficient to operate while scaling to hundreds of billions of parameters (744 billion in total, as detailed below), which keeps performance practical in day-to-day use.

The model uses a hybrid attention mechanism together with an optimized decoding pipeline, which lets it perform well on tasks that involve long documents, reasoning, and code generation.

Here are the components that make up its architecture:

- Mixture-of-Experts (MoE): The model has 744 billion parameters in total, divided among 256 experts. It uses top-8 routing, so eight experts process each token, plus one shared expert that is applied to every token. As a result, roughly 40 billion parameters are active per token.

- Attention: The model combines two attention methods, Multi-head Latent Attention (MLA) and DeepSeek Sparse Attention. It supports a context of roughly 200,000 tokens, with a maximum of 202,752. The KV cache is stored in compressed form, with a LoRA rank of 512 and a head dimension of 64, to improve performance.

- Structure: The model has 78 layers with a hidden size of 6144. The first three layers are standard dense layers, and the remaining layers use sparse MoE blocks.

- Speculative Decoding (MTP): A multi-token prediction (MTP) head predicts several tokens at once, which speeds up generation through speculative decoding.
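To make the routing described in the MoE bullet more concrete, here is a minimal, illustrative sketch of top-8 routing with one shared expert. It is not GLM-5.1's actual implementation: the softmax gate, the weight renormalization, and the toy scalar "experts" are assumptions chosen for clarity, and only the counts (256 experts, 8 active, 744B total and roughly 40B active parameters) come from the article.

```python
import math
import random

NUM_EXPERTS = 256  # routed experts, as stated in the article
TOP_K = 8          # experts activated per token, as stated in the article


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_token(gate_scores, top_k=TOP_K):
    """Pick the top_k experts for one token and renormalize their weights.

    Assumption: softmax gating followed by renormalization; real models
    may use a different gating function.
    """
    probs = softmax(gate_scores)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:top_k]
    mass = sum(probs[i] for i in chosen)
    return {i: probs[i] / mass for i in chosen}


def moe_layer(x, gate_scores, experts, shared_expert):
    """Weighted sum of the routed experts' outputs plus the shared expert."""
    weights = route_token(gate_scores)
    routed = sum(w * experts[i](x) for i, w in weights.items())
    return routed + shared_expert(x)


def shared_expert(x):
    """Hypothetical shared expert that is applied to every token."""
    return 0.5 * x


# Hypothetical toy experts: each one scales a scalar input differently.
experts = [lambda x, k=k: x * (k + 1) / NUM_EXPERTS for k in range(NUM_EXPERTS)]

rng = random.Random(0)
gate_scores = [rng.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]

weights = route_token(gate_scores)
output = moe_layer(2.0, gate_scores, experts, shared_expert)

print(f"routed experts used for this token: {len(weights)} of {NUM_EXPERTS}")
print(f"share of parameters active per token: {40 / 744:.1%}")
```

The point of the sketch is that only the selected experts run for a given token, which is why the active parameter count is a small fraction of the total.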

Together, these features give GLM-5.1 its large scale and long context window while requiring less compute than a fully dense model.
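The speculative decoding idea mentioned above can be sketched as a generic greedy draft-and-verify loop. This is a toy under stated assumptions, not Z.ai's decoder: `target_next` and `draft_next` are hypothetical stand-ins for the full model and for a cheap draft predictor (the role the article assigns to the MTP head), and tokens are plain integers.

```python
def target_next(tokens):
    """Hypothetical full model: the next token is a deterministic function of the context."""
    return (sum(tokens) * 31 + len(tokens)) % 50


def draft_next(tokens):
    """Hypothetical cheap draft predictor: agrees with the target except at some positions."""
    guess = target_next(tokens)
    return guess if len(tokens) % 4 else (guess + 1) % 50


def greedy_decode(prompt, max_new_tokens):
    """Baseline: one target-model call per generated token."""
    tokens = list(prompt)
    for _ in range(max_new_tokens):
        tokens.append(target_next(tokens))
    return tokens


def speculative_decode(prompt, max_new_tokens, draft_len=4):
    """Draft several tokens, then keep the longest prefix the target agrees with."""
    tokens = list(prompt)
    target_passes = 0
    while len(tokens) - len(prompt) < max_new_tokens:
        # 1. Draft a short continuation cheaply.
        draft = []
        for _ in range(draft_len):
            draft.append(draft_next(tokens + draft))

        # 2. Verify. A real system scores all drafted positions in one
        #    batched forward pass; here that pass is simulated position by position.
        target_passes += 1
        accepted = []
        for tok in draft:
            expected = target_next(tokens + accepted)
            if tok == expected:
                accepted.append(tok)
            else:
                # First mismatch: keep the target's token and discard the rest.
                accepted.append(expected)
                break
        else:
            # Every drafted token matched, so the same pass yields one extra token.
            accepted.append(target_next(tokens + accepted))
        tokens.extend(accepted)
    return tokens[: len(prompt) + max_new_tokens], target_passes


prompt = [1, 2, 3]
spec_tokens, passes = speculative_decode(prompt, max_new_tokens=20)
print("matches greedy decoding:", spec_tokens == greedy_decode(prompt, 20))
print("verification passes used:", passes)
```

Because every kept token is one the target model would have produced anyway, the output is identical to plain greedy decoding; the saving comes from needing fewer sequential passes of the large model.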

How to Access GLM-5.1

Developers can use GLM-5.1 in several ways. The full model weights are openly available under the MIT license. Some of the available options are:

- Hugging Face (MIT license): The weights are available for download. Running them requires enterprise-grade GPU hardware at a minimum.

- Z.ai API / Coding Plans: Direct API access costs roughly $1.00 per million input tokens and $3.20 per million output tokens. The API works with existing Claude and OpenAI toolchains.

- Third-Party Platforms: The model is available through platforms and inference engines such as OpenRouter and SGLang, which provide preconfigured GLM-5.1 support.

- Local Deployment: Users with sufficient hardware, such as multiple B200 GPUs or equivalent, can run GLM-5.1 locally with vLLM or SGLang.

With both open weights and commercial API access, GLM-5.1 is available to enterprises and individuals alike. For this blog, we'll use a Hugging Face token to access the model.
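For a rough sense of what API usage might cost, the two prices quoted above can be turned into a small estimator. This is a back-of-the-envelope sketch: it assumes the $1.00 figure applies to input tokens and the $3.20 figure to output tokens, and both numbers are the approximate values from this article rather than an official price list.

```python
INPUT_PRICE_PER_M = 1.00   # USD per million input tokens (approximate; assumed to be the input rate)
OUTPUT_PRICE_PER_M = 3.20  # USD per million output tokens (approximate; assumed to be the output rate)


def estimate_cost(input_tokens: int, output_tokens: int) -> float:
    """Estimate the API cost in USD for a given number of input and output tokens."""
    if input_tokens < 0 or output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    total = input_tokens * INPUT_PRICE_PER_M + output_tokens * OUTPUT_PRICE_PER_M
    return total / 1_000_000


# Example: a long-context request with a short answer.
cost = estimate_cost(input_tokens=150_000, output_tokens=2_000)
print(f"estimated cost: ${cost:.4f}")
```

Under these assumptions, long prompts dominate the bill for retrieval-style workloads, while agentic runs with many generated tool calls are driven more by the output rate.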

GLM-5.1 Benchmarks

Here are the scores GLM-5.1 has achieved across various benchmarks.

Coding

GLM-5.1 shows exceptional ability on programming tasks. It scored 58.4 on SWE-Bench Pro, surpassing both GPT-5.4 (57.7) and Claude Opus 4.6 (57.3). Across three coding benchmarks (SWE-Bench Pro, Terminal-Bench 2.0, and CyberGym), it reached an overall score above 55, placing third worldwide behind GPT-5.4 (58.0) and Claude Opus 4.6 (57.5). It also outperforms GLM-5 by a significant margin, with scores of 68.7 compared to 48.3, and produces intricate code with greater accuracy than before.

Agentic

GLM-5.1 supports agentic workflows: multi-step tasks that require planning, code execution, and tool use. It shows significant progress over long-running sessions. On the VectorDBBench optimization task, GLM-5.1 ran 655 iterations with more than 6,000 tool calls and discovered several algorithmic improvements. It also continued to make progress beyond 1,000 tool calls, which shows that it can keep improving under sustained optimization.

- VectorDBBench: Achieved 21,500 QPS over 655 iterations (a 6× gain) on an index optimization task.

- KernelBench: A 3.6× ML performance gain on GPU kernels, versus 2.6× for GLM-5, with progress continuing past 1,000 turns.

- Self-debugging: Built a complete Linux desktop stack from scratch within 8 hours (planning, testing, and error correction), as claimed by Z.ai.
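The long-running optimization runs described above follow a propose, measure, keep-if-better pattern. The sketch below illustrates only that loop shape. It is not the VectorDBBench or KernelBench harness: the `benchmark` function, the configuration keys, and the random `propose` step are all hypothetical stand-ins for the model's suggestions and for real tool calls.

```python
import random


def benchmark(config):
    """Hypothetical benchmark: a throughput-like score that peaks at batch=64, threads=16."""
    return 1000.0 - 0.1 * (config["batch"] - 64) ** 2 - 2.0 * (config["threads"] - 16) ** 2


def propose(config, rng):
    """Hypothetical proposal step; in an agent, the model would suggest this change."""
    candidate = dict(config)
    key = rng.choice(sorted(candidate))
    candidate[key] = max(1, candidate[key] + rng.choice([-4, -1, 1, 4]))
    return candidate


def optimize(start, iterations, seed=0):
    """Run the loop: propose a change, measure it, and keep it only if the score improves."""
    rng = random.Random(seed)
    best, best_score = dict(start), benchmark(start)
    history = [best_score]
    for _ in range(iterations):
        candidate = propose(best, rng)
        score = benchmark(candidate)  # stands in for a tool call that runs the benchmark
        if score > best_score:
            best, best_score = candidate, score
        history.append(best_score)
    return best, best_score, history


start = {"batch": 8, "threads": 2}
best, best_score, history = optimize(start, iterations=200)
print("start score:", benchmark(start))
print("best config:", best, "score:", best_score)
```

What makes runs of hundreds of iterations hard in practice is staying coherent across thousands of tool calls, which is the ability the benchmark results above are meant to measure.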

Reasoning

GLM-5.1 delivers excellent results on standard reasoning and question-answering benchmarks, with performance that matches leading systems on general intelligence evaluations.

GLM-5.1 scored 95.3% on AIME, an advanced math competition, and 86.2% on GPQA, which tests advanced question answering. These scores approach those of the top systems, including GPT-5.4, which scored 98.7% and 94.3% on the two tests. GLM-5.1 shows broad academic capability, with high scores across multiple disciplines and Olympiad-style competitions.

GLM-5.1 Capabilities

GLM-5.1 performs exceptionally well at three kinds of task: long-term planning, code generation, and multi-turn logical reasoning. It can write and debug code, answer difficult questions, and execute complex tasks with excellent results. Its function calling and structured output capabilities let developers build “agents” that interact with various tools. The two tasks below demonstrate its programming capabilities: each is posed as a prompt, and the model returns a solution that includes code.
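To illustrate how function calling and structured output are typically wired into an agent, here is a minimal dispatcher sketch. The JSON shape (`name` plus `arguments`) and the two example tools are assumptions made for illustration; they are not taken from the GLM-5.1 API, whose exact tool-call format should be checked in the provider's documentation.

```python
import json


def add(a: float, b: float) -> float:
    """Example tool: add two numbers."""
    return a + b


def word_count(text: str) -> int:
    """Example tool: count whitespace-separated words."""
    return len(text.split())


# Registry mapping tool names to the Python functions the agent may call.
TOOLS = {"add": add, "word_count": word_count}


def dispatch(tool_call_json: str) -> str:
    """Parse one structured tool call, run the matching tool, and return a JSON result."""
    try:
        call = json.loads(tool_call_json)
        func = TOOLS[call["name"]]
        result = func(**call["arguments"])
    except (json.JSONDecodeError, KeyError, TypeError) as exc:
        # Malformed JSON, an unknown tool, or bad arguments are reported to the model
        # as structured errors so that it can correct itself on the next turn.
        return json.dumps({"ok": False, "error": f"{type(exc).__name__}: {exc}"})
    return json.dumps({"ok": True, "result": result})


# A hypothetical tool call, as a model might emit it in structured-output mode.
model_output = '{"name": "add", "arguments": {"a": 2, "b": 3}}'
print(dispatch(model_output))
```

Returning errors as structured data rather than raising them keeps the loop running, which matters for the kind of multi-turn debugging described in this section.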

Task 1: Finding the Factorial of a Large Number

Prompt:

“Hi, you are an excellent code instructor, so help me with this question.

Q. Write Python code for finding the Factorial of a Large Number.”

Response by GLM-5.1:

    def factorial_large(n: int) -> str:
        if n < 0: return ""
        if n == 0: return "1"

        # Array to store individual digits (stored in reverse order for easier carry handling)
        res = [1]
        for x in range(2, n + 1):
            carry = 0
            # Multiply x with every digit in the current result
            for i in range(len(res)):
                product = res[i] * x + carry
                res[i] = product % 10
                carry = product // 10
            # Append the remaining carry to the result array
            while carry:
                res.append(carry % 10)
                carry //= 10

        # The digits are stored in reverse order, so reverse and join them
        return "".join(map(str, res[::-1]))

Output:

Verdict: GLM-5.1's response is efficient and technically valid. It handles very large numbers through manual digit-by-digit processing, and its iterative approach combines performance with correct output. The implementation is short, with limited documentation and only basic error handling. That makes it appropriate for algorithm practice but less suitable for production use, which calls for clear, extensible, and robust code.

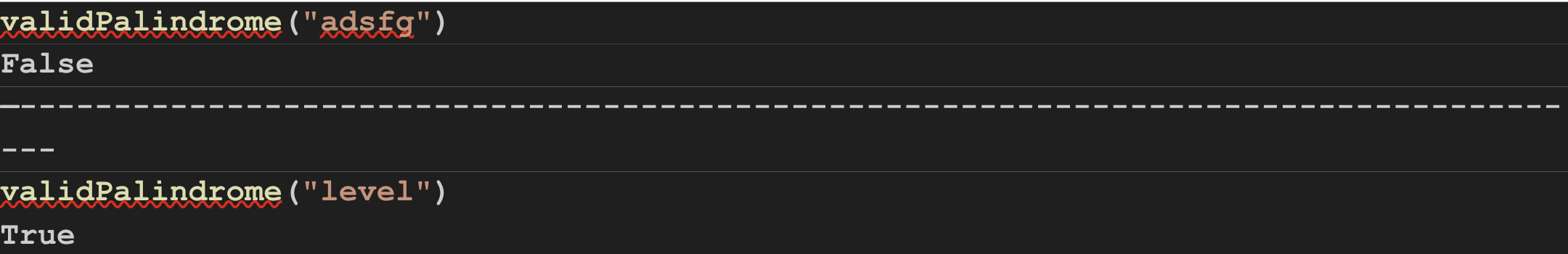
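One way to gain confidence in the Task 1 response is to compare it with Python's built-in arbitrary-precision `math.factorial`. The sketch below repeats the function so that it runs on its own; the `check_factorial` helper and the range it tests are our own additions, not part of the model's answer.

```python
import math


def factorial_large(n: int) -> str:
    """Digit-array factorial, as in the response above (digits stored least significant first)."""
    if n < 0:
        return ""
    if n == 0:
        return "1"
    res = [1]
    for x in range(2, n + 1):
        carry = 0
        for i in range(len(res)):
            product = res[i] * x + carry
            res[i] = product % 10
            carry = product // 10
        while carry:
            res.append(carry % 10)
            carry //= 10
    return "".join(map(str, res[::-1]))


def check_factorial(limit: int) -> bool:
    """Return True if factorial_large agrees with math.factorial for every n in 0..limit."""
    return all(factorial_large(n) == str(math.factorial(n)) for n in range(limit + 1))


print("agrees with math.factorial up to 150:", check_factorial(150))
print("digits in 100!:", len(factorial_large(100)))
```

Since Python integers are already arbitrary precision, the digit-array approach is mainly of educational value here; it mirrors how the problem would be solved in a language with fixed-width integers.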

Task 2: Checking if a String is a Palindrome

Prompt:

“Hi, you are an excellent code instructor, so help me with this question.

Q. Given a string s, return true if s can be a palindrome after deleting at most one character from it.”

Response by GLM-5.1:

    def validPalindrome(s: str) -> bool:
        # Helper: check whether s[l..r] is a palindrome
        def is_palindrome(l: int, r: int) -> bool:
            while l < r:
                if s[l] != s[r]:
                    return False
                l += 1
                r -= 1
            return True

        left, right = 0, len(s) - 1
        while left < right:
            if s[left] != s[right]:
                # On the first mismatch, try skipping either the left or the right character
                return is_palindrome(left + 1, right) or is_palindrome(left, right - 1)
            left += 1
            right -= 1
        return True

Output:

Verdict: The solution is efficient because it uses a two-pointer technique, which runs in linear time. It has minimal overhead and relies on a small, effective helper function. The implementation is short and lacks full documentation and comments. That makes it better suited to solving problems quickly than to production environments, which need readable and maintainable code.
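The Task 2 response can be cross-checked against a brute-force reference that simply tries every possible single deletion. The sketch below repeats the function so that it runs on its own; `brute_force` and the exhaustive test over short strings are our own additions for verification.

```python
from itertools import product


def validPalindrome(s: str) -> bool:
    """Two-pointer solution, as in the response above."""
    def is_palindrome(l: int, r: int) -> bool:
        while l < r:
            if s[l] != s[r]:
                return False
            l += 1
            r -= 1
        return True

    left, right = 0, len(s) - 1
    while left < right:
        if s[left] != s[right]:
            return is_palindrome(left + 1, right) or is_palindrome(left, right - 1)
        left += 1
        right -= 1
    return True


def brute_force(s: str) -> bool:
    """Reference answer: s is a palindrome as is, or after deleting exactly one character."""
    if s == s[::-1]:
        return True
    for i in range(len(s)):
        t = s[:i] + s[i + 1:]
        if t == t[::-1]:
            return True
    return False


def check_all(alphabet: str = "abc", max_len: int = 6) -> bool:
    """Compare both functions on every string over the alphabet up to max_len characters."""
    for length in range(max_len + 1):
        for chars in product(alphabet, repeat=length):
            s = "".join(chars)
            if validPalindrome(s) != brute_force(s):
                return False
    return True


print("two-pointer matches brute force:", check_all())
```

The brute-force version takes quadratic time, while the two-pointer version makes a single pass plus at most two helper scans.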

Overall Review of GLM-5.1 Capabilities

GLM-5.1 supports a range of applications through its open-source availability and sophisticated design. It lets developers build systems for deep reasoning, code generation, and tool use. It retains the existing strengths of the GLM family, sparse MoE and long-context support, and adds new capabilities for adaptive thinking and running debugging loops. With open weights and low-cost API options, it is accessible for research while also supporting practical applications in software engineering and other fields.

Conclusion

GLM-5.1 is a live example of how current AI systems are becoming more efficient and scalable while improving their reasoning capabilities. Its Mixture-of-Experts architecture delivers high performance at a reasonable operating cost. Overall, this makes it capable of handling real-world AI applications that involve intensive workloads.

As AI moves toward agent-based systems and longer context, GLM-5.1 lays a foundation for future development. Its routing system and attention mechanism, together with multi-token prediction, open new possibilities for upcoming large language models.
