Generative AI’s current trajectory relies heavily on Latent Diffusion Models (LDMs) to manage the computational cost of high-resolution synthesis. By compressing data into a lower-dimensional latent space, models can scale effectively. However, a fundamental trade-off persists: lower information density makes latents easier to learn but sacrifices reconstruction quality, while higher density enables near-perfect reconstruction but demands greater modeling capacity.

Google DeepMind researchers have introduced Unified Latents (UL), a framework designed to navigate this trade-off systematically. The framework jointly regularizes latent representations with a diffusion prior and decodes them with a diffusion model.

The Architecture: Three Pillars of Unified Latents

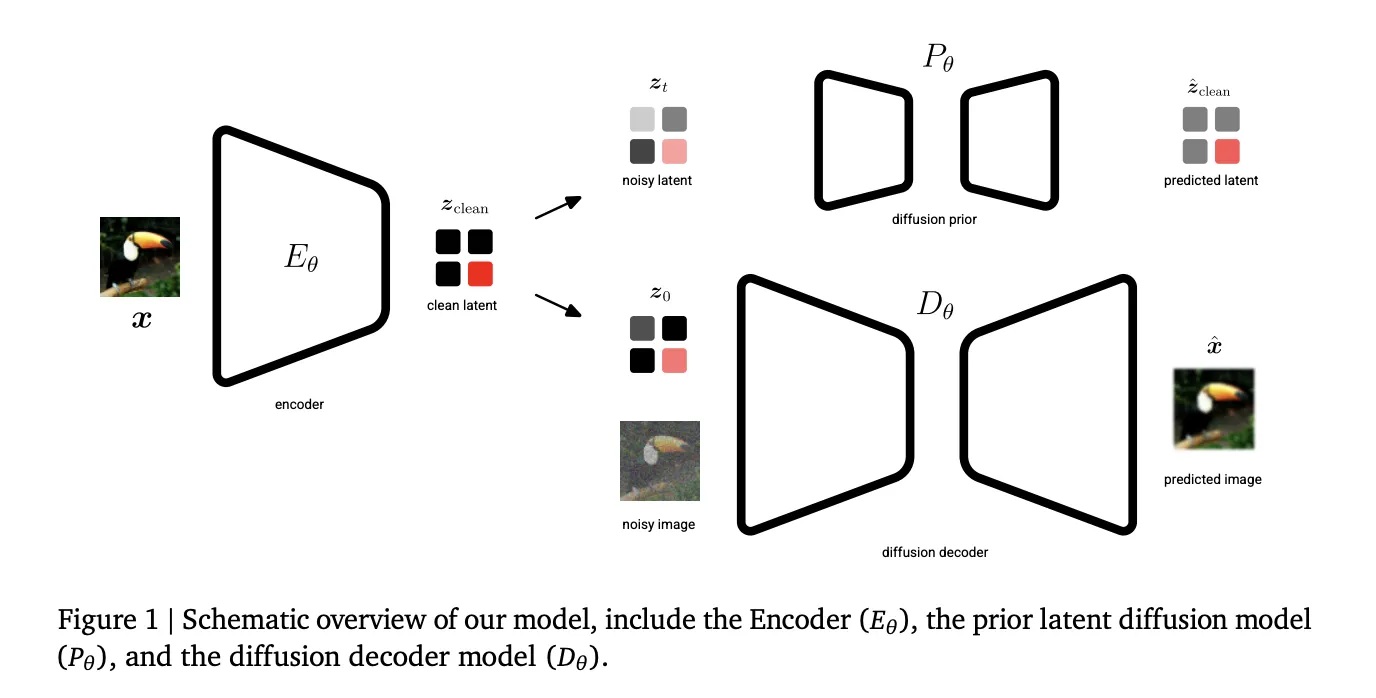

The Unified Latents (UL) framework rests on three specific technical components:

- Fixed Gaussian Noise Encoding: Unlike standard Variational Autoencoders (VAEs) that learn an encoder distribution, UL uses a deterministic encoder E_θ that predicts a single latent z_clean. This latent is then forward-noised to a final log signal-to-noise ratio (log-SNR) of λ(0)=5.

- Prior-Alignment: The prior diffusion model is aligned with this minimum noise level. This alignment allows the Kullback-Leibler (KL) term in the Evidence Lower Bound (ELBO) to reduce to a simple weighted Mean Squared Error (MSE) over noise levels.

- Reweighted Decoder ELBO: The decoder uses a sigmoid-weighted loss, which provides an interpretable bound on the latent bitrate while allowing the model to prioritize different noise levels.
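The fixed-noise encoding step can be illustrated with a minimal sketch. It assumes a variance-preserving parameterization, in which alpha² = sigmoid(λ) and sigma² = sigmoid(−λ); the article does not state which parameterization UL uses, and the names `alpha_sigma_from_logsnr` and `noise_latent` are hypothetical.

```python
import math
import random


def alpha_sigma_from_logsnr(logsnr):
    """Signal and noise scales for a given log-SNR.

    Assumes a variance-preserving schedule (alpha^2 + sigma^2 = 1),
    which is an illustrative choice, not something the article confirms.
    """
    alpha_sq = 1.0 / (1.0 + math.exp(-logsnr))  # sigmoid(logsnr)
    sigma_sq = 1.0 / (1.0 + math.exp(logsnr))   # sigmoid(-logsnr)
    return math.sqrt(alpha_sq), math.sqrt(sigma_sq)


def noise_latent(z_clean, logsnr=5.0, rng=None):
    """Forward-noise a deterministic latent to a fixed log-SNR.

    z_clean is a flat list of floats standing in for the encoder output.
    """
    rng = rng or random.Random(0)
    alpha, sigma = alpha_sigma_from_logsnr(logsnr)
    return [alpha * z + sigma * rng.gauss(0.0, 1.0) for z in z_clean]


if __name__ == "__main__":
    alpha, sigma = alpha_sigma_from_logsnr(5.0)
    print(f"alpha={alpha:.4f} sigma={sigma:.4f}")
    print(noise_latent([0.5, -0.25, 1.0]))
```

Under this assumption, a log-SNR of 5 corresponds to a noise standard deviation of roughly 0.08, so the noised latent stays close to the encoder output while still carrying a fixed, known amount of noise.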

The Two-Stage Training Process

The UL framework is implemented in two distinct stages to optimize both latent learning and generation quality.

Stage 1: Joint Latent Learning

In the first stage, the encoder, diffusion prior (P_θ), and diffusion decoder (D_θ) are trained jointly. The objective is to learn latents that are simultaneously encoded, regularized, and modeled. The encoder’s output noise is linked directly to the prior’s minimum noise level, providing a tight upper bound on the latent bitrate.
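To see why a KL term can collapse to a squared error and be read as a bitrate, consider the standard closed form for two Gaussians that share the same isotropic variance: the KL divergence equals the squared distance between the means divided by 2σ². The sketch below shows only this general identity, not UL's actual loss; the function names are hypothetical.

```python
import math


def gaussian_kl_equal_variance(mu_q, mu_p, sigma):
    """KL(N(mu_q, sigma^2 I) || N(mu_p, sigma^2 I)) in nats.

    With equal variances the log-determinant and trace terms cancel,
    leaving a scaled squared error between the two means.
    """
    squared_error = sum((q - p) ** 2 for q, p in zip(mu_q, mu_p))
    return squared_error / (2.0 * sigma ** 2)


def nats_to_bits(nats):
    """Convert an information quantity from nats to bits."""
    return nats / math.log(2.0)


if __name__ == "__main__":
    kl = gaussian_kl_equal_variance([1.0, 2.0], [0.0, 0.0], sigma=1.0)
    print(f"KL = {kl} nats = {nats_to_bits(kl):.3f} bits")
```

Because the result is an information quantity, summing such terms gives a cost in bits, which is the sense in which a KL-based loss can bound the latent bitrate.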

Stage 2: Base Model Scaling

The research team found that a prior trained solely on an ELBO loss in Stage 1 does not produce optimal samples because it weights low-frequency and high-frequency content equally. Consequently, in Stage 2, the encoder and decoder are frozen. A new ‘base model’ is then trained on the latents using a sigmoid weighting, which significantly improves performance. This stage allows for larger model sizes and batch sizes.
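A sigmoid weighting of this kind can be sketched as follows. The form w(λ) = sigmoid(bias − λ) and the default `bias` value are assumptions chosen for illustration; the article does not give the exact weighting function or its hyperparameters, and the function names are hypothetical.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def sigmoid_weight(logsnr, bias=0.0):
    """Loss weight that shrinks as log-SNR grows.

    High log-SNR means low noise, where the remaining error is mostly
    fine detail; this weight de-emphasizes those levels. The functional
    form and the bias value are illustrative assumptions.
    """
    return sigmoid(bias - logsnr)


def weighted_mse(pred, target, logsnr, bias=0.0):
    """Mean squared error scaled by the sigmoid weight for one noise level."""
    mse = sum((p - t) ** 2 for p, t in zip(pred, target)) / len(pred)
    return sigmoid_weight(logsnr, bias) * mse


if __name__ == "__main__":
    for logsnr in (-5.0, 0.0, 5.0):
        print(logsnr, round(sigmoid_weight(logsnr), 4))
```

Compared with a loss that treats every noise level equally, this weighting shifts training effort toward noisier levels, where coarse structure is decided.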

Technical Performance and SOTA Benchmarks

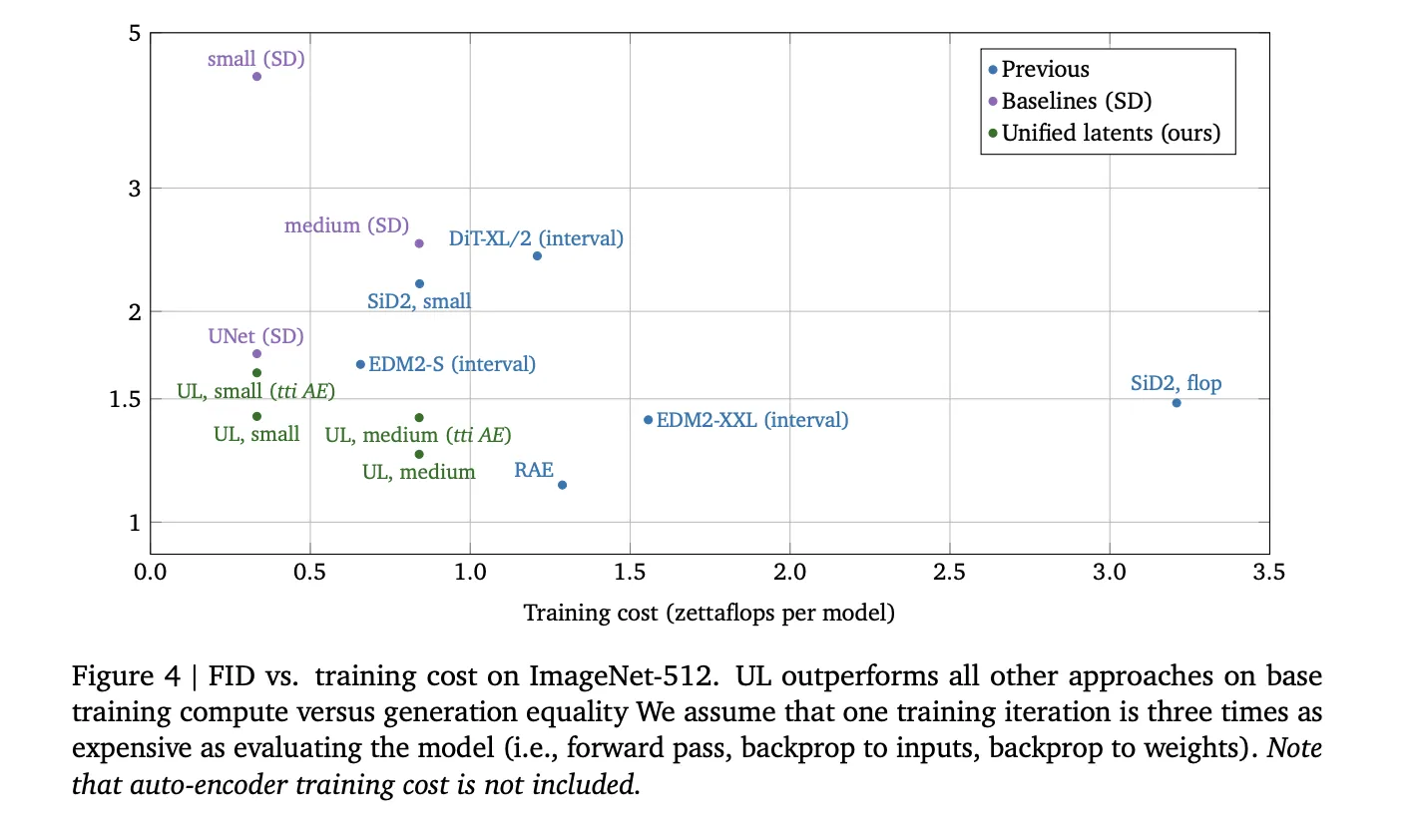

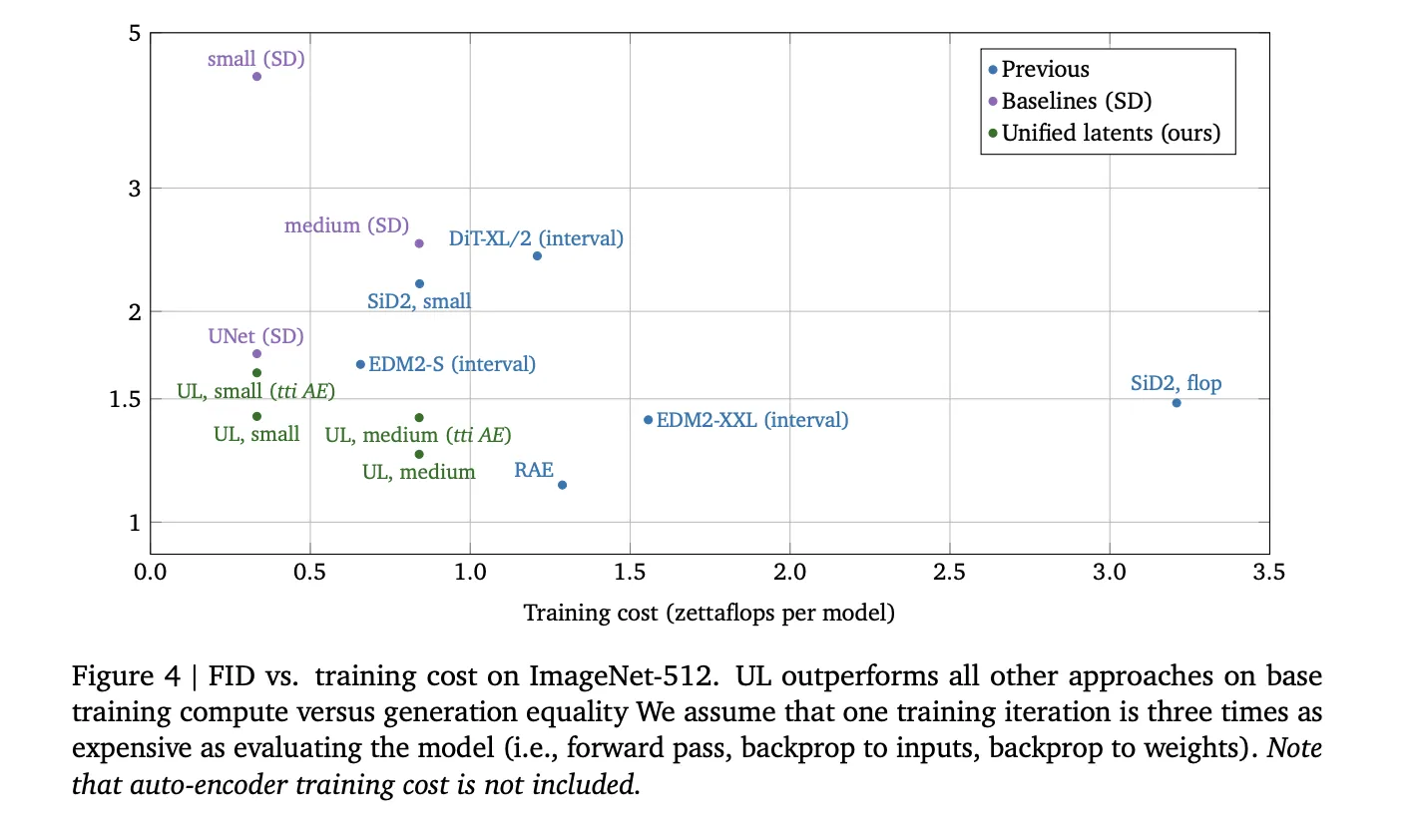

Unified Latents demonstrate high efficiency in the relationship between training compute (FLOPs) and generation quality.

| Metric | Dataset | Result | Significance |
| --- | --- | --- | --- |
| FID | ImageNet-512 | 1.4 | Outperforms models trained on Stable Diffusion latents for a given compute budget. |
| FVD | Kinetics-600 | 1.3 | Sets a new State-of-the-Art (SOTA) for video generation. |
| PSNR | ImageNet-512 | Up to 30.1 | Maintains high reconstruction fidelity even at higher compression levels. |

On ImageNet-512, UL outperformed previous approaches, including DiT and EDM2 variants, in terms of training cost versus generation FID. In video tasks using Kinetics-600, a small UL model achieved an FVD of 1.7, while the medium variant reached the SOTA FVD of 1.3.

Key Takeaways

- Integrated Diffusion Framework: UL jointly optimizes an encoder, a diffusion prior, and a diffusion decoder, ensuring that latent representations are simultaneously encoded, regularized, and modeled for high-efficiency generation.

- Fixed-Noise Information Bound: By using a deterministic encoder that adds a fixed amount of Gaussian noise (specifically at a log-SNR of λ(0)=5) and linking it to the prior’s minimum noise level, the model provides a tight, interpretable upper bound on the latent bitrate.

- Two-Stage Training Strategy: The approach involves an initial joint training stage for the autoencoder and prior, followed by a second stage where the encoder and decoder are frozen and a larger ‘base model’ is trained on the latents to maximize sample quality.

- State-of-the-Art Performance: The framework established a new state-of-the-art (SOTA) Fréchet Video Distance (FVD) of 1.3 on Kinetics-600 and achieved a competitive Fréchet Inception Distance (FID) of 1.4 on ImageNet-512 while requiring fewer training FLOPs than standard latent diffusion baselines.
