Image by Author

# Introduction

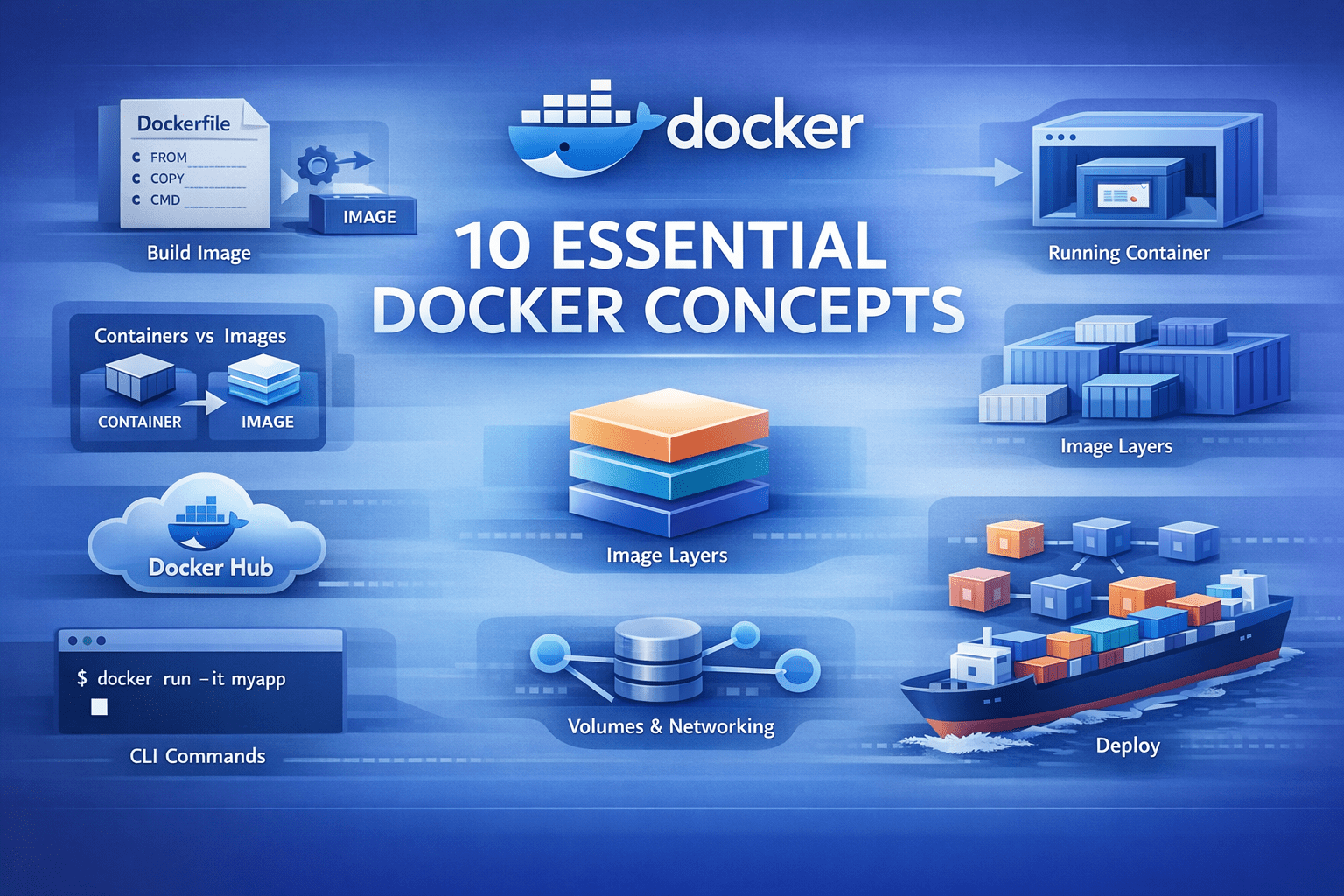

Docker has simplified how we build and deploy applications. But if you are just getting started with Docker, the terminology can often be confusing. You'll likely hear terms like "images," "containers," and "volumes" without really understanding how they fit together. This article will help you understand the core Docker concepts you need to know.

Let's get started.

# 1. Docker Image

A Docker image is an artifact that contains everything your application needs to run: the code, runtime, libraries, environment variables, and configuration files.

Images are immutable. Once you create an image, it doesn't change. This ensures your application runs the same way on your laptop, your coworker's machine, and in production, which removes most environment-specific bugs.

Here is how you build an image from a Dockerfile. A Dockerfile is a recipe that defines how to build the image:

    docker build -t my-python-app:1.0 .

The `-t` flag tags your image with a name and version. The `.` tells Docker to use the current directory as the build context, which is also where it looks for the Dockerfile by default. Once built, this image becomes a reusable template for your application.

# 2. Docker Container

A container is what you get when you run an image. It's an isolated environment where your application actually executes.

    docker run -d -p 8000:8000 my-python-app:1.0

The `-d` flag runs the container in the background. The `-p 8000:8000` flag maps port 8000 on your host to port 8000 in the container, making your app accessible at `localhost:8000`.

You can run multiple containers from the same image. They operate independently. This is how you test different versions simultaneously or scale horizontally by running ten copies of the same application.

Containers are lightweight. Unlike virtual machines, they don't boot a full operating system. They start in seconds and share the host's kernel.

# 3. Dockerfile

A Dockerfile contains instructions for building an image. It's a text file that tells Docker exactly how to set up your application environment.

Here is a Dockerfile for a Flask application:

    FROM python:3.11-slim

    WORKDIR /app

    COPY requirements.txt .

    RUN pip install --no-cache-dir -r requirements.txt

    COPY . .

    EXPOSE 8000

    CMD ["python", "app.py"]

Let's break down each instruction:

- `FROM python:3.11-slim`: Start with a base image that has Python 3.11 installed. The slim variant is smaller than the standard image.

- `WORKDIR /app`: Set the working directory to `/app`. All subsequent commands run from here.

- `COPY requirements.txt .`: Copy just the requirements file first, not all your code yet.

- `RUN pip install --no-cache-dir -r requirements.txt`: Install Python dependencies. The `--no-cache-dir` flag keeps the image size smaller.

- `COPY . .`: Now copy the rest of your application code.

- `EXPOSE 8000`: Document that the app uses port 8000.

- `CMD ["python", "app.py"]`: Define the command to run when the container starts.

The order of these instructions affects how long your builds take, which is why we need to understand layers.

# 4. Image Layers

Every instruction in a Dockerfile creates a new layer. These layers stack on top of each other to form the final image.

Docker caches each layer. When you rebuild an image, Docker checks whether each layer needs to be recreated. If nothing changed, it reuses the cached layer instead of rebuilding.

This is why we copy `requirements.txt` before copying the entire application. Your dependencies change less frequently than your code. When you modify `app.py`, Docker reuses the cached layer that installed dependencies and only rebuilds the layers from the code copy onward.

Here is the layer structure from our Dockerfile:

1. Base Python image (`FROM`)
2. Set working directory (`WORKDIR`)
3. Copy `requirements.txt` (`COPY`)
4. Install dependencies (`RUN pip install`)
5. Copy application code (`COPY`)
6. Metadata about port (`EXPOSE`)
7. Default command (`CMD`)

If you only change your Python code, Docker rebuilds only layers 5–7. Layers 1–4 come from cache, making builds much faster. Understanding layers helps you write efficient Dockerfiles. Put frequently changing files at the end and stable dependencies at the beginning.
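The caching rule described above can be sketched as a small Python model. This is a simplification for illustration, not how Docker computes cache keys internally: it assumes that once one layer's inputs change, that layer and every later layer are rebuilt. The function name `plan_build` and the `first_changed` parameter are made up for this example.

```python
# Instructions from the article's Dockerfile, in order (layer numbers start at 1).
DOCKERFILE_STEPS = [
    "FROM python:3.11-slim",
    "WORKDIR /app",
    "COPY requirements.txt .",
    "RUN pip install --no-cache-dir -r requirements.txt",
    "COPY . .",
    "EXPOSE 8000",
    'CMD ["python", "app.py"]',
]


def plan_build(steps, first_changed):
    """Return (layer_number, instruction, action) for each step.

    first_changed is the 1-based number of the first layer whose inputs changed,
    or None when nothing changed. Simplified model: every layer before it is a
    cache hit; that layer and all later ones are rebuilt.
    """
    plan = []
    for number, instruction in enumerate(steps, start=1):
        cached = first_changed is None or number < first_changed
        plan.append((number, instruction, "cached" if cached else "rebuilt"))
    return plan


# Editing app.py changes the input of layer 5 (COPY . .).
for number, instruction, action in plan_build(DOCKERFILE_STEPS, first_changed=5):
    print(f"{number}: {action:8} {instruction}")
```

Calling `plan_build(DOCKERFILE_STEPS, first_changed=3)` models an edit to `requirements.txt`: layers 3–7 are rebuilt, including the slow dependency install, which is why that file is copied as early as possible and the application code as late as possible.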

# 5. Docker Volumes

Containers are temporary. When you delete a container, everything inside disappears, including data your application created.

Docker volumes solve this problem. They're directories that exist outside the container filesystem and persist after the container is removed.

    docker run -d \
      -e POSTGRES_PASSWORD=secret \
      -v postgres-data:/var/lib/postgresql/data \
      postgres:15

This creates a named volume called `postgres-data` and mounts it at `/var/lib/postgresql/data` inside the container. The official `postgres` image also needs `POSTGRES_PASSWORD` set before it will start, which is why the command passes it with `-e`. Your database data survives container restarts and deletions.

You can also mount directories from your host machine, which is useful during development:

    docker run -d \
      -v "$(pwd)":/app \
      -p 8000:8000 \
      my-python-app:1.0

This mounts your current directory into the container at `/app`. Changes you make to files on your host appear immediately in the container, enabling live development without rebuilding the image.

There are three types of mounts:

- Named volumes (`postgres-data:/path`): Managed by Docker, best for production data

- Bind mounts (`/host/path:/container/path`): Mount any host directory, good for development

- tmpfs mounts: Store data in memory only, useful for temporary data
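To make the difference between the first two mount types concrete, here is a minimal Python sketch that classifies the value passed to `-v`. It rests on a stated assumption: a source that starts with `/` is a host path (as `$(pwd)` produces on Linux and macOS), and any other source is a volume name. The function `classify_mount` is hypothetical and does not reproduce Docker's full parsing rules.

```python
def classify_mount(spec):
    """Classify the value given to `docker run -v` (Linux/macOS-style paths only).

    Returns a (kind, source, target) tuple where kind is "bind", "named volume",
    or "anonymous volume". tmpfs mounts are not expressed with -v, so they are
    out of scope here.
    """
    parts = spec.split(":")
    if len(parts) == 1:
        # Only a container path was given: Docker creates an anonymous volume.
        return ("anonymous volume", None, parts[0])
    # An optional third field such as "ro" is ignored in this sketch.
    source, target = parts[0], parts[1]
    kind = "bind" if source.startswith("/") else "named volume"
    return (kind, source, target)


print(classify_mount("postgres-data:/var/lib/postgresql/data"))
print(classify_mount("/home/me/project:/app"))
```

The first call reports a named volume and the second a bind mount, matching the two `docker run` examples in this section.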

# 6. Docker Hub

Docker Hub is a public registry where people share Docker images. When you write `FROM python:3.11-slim`, Docker pulls that image from Docker Hub.

You can search for images:

    docker search redis

And pull them to your machine:

    docker pull redis:7-alpine

You can also push your own images to share with others or deploy to servers:

    docker tag my-python-app:1.0 username/my-python-app:1.0

    docker push username/my-python-app:1.0

Docker Hub hosts official images for popular software like PostgreSQL, Redis, Nginx, Python, and hundreds more. These are maintained by the software creators and follow best practices.

For private projects, you can create private repositories on Docker Hub or use alternative registries like Amazon Elastic Container Registry (ECR), Google Container Registry (GCR), or Azure Container Registry (ACR).

# 7. Docker Compose

Real applications need multiple services. A typical web app has a Python backend, a PostgreSQL database, a Redis cache, and maybe a worker process.

Docker Compose lets you define all these services in a single YAML file and manage them together.

Create a docker-compose.yml file:

    version: '3.8'

    services:
      web:
        build: .
        ports:
          - "8000:8000"
        environment:
          - DATABASE_URL=postgresql://postgres:secret@db:5432/myapp
          - REDIS_URL=redis://cache:6379
        depends_on:
          - db
          - cache
        volumes:
          - .:/app

      db:
        image: postgres:15-alpine
        volumes:
          - postgres-data:/var/lib/postgresql/data
        environment:
          - POSTGRES_PASSWORD=secret
          - POSTGRES_DB=myapp

      cache:
        image: redis:7-alpine

    volumes:
      postgres-data:

Now start your entire application stack with one command:

    docker-compose up -d

This starts three containers: `web`, `db`, and `cache`. Docker Compose handles networking automatically: the `web` service can reach the database at hostname `db` and Redis at hostname `cache`.

To stop everything, run:

    docker-compose down

To rebuild after code changes:

    docker-compose up -d --build

Docker Compose is especially useful for development environments. Instead of installing PostgreSQL and Redis on your machine, you run them in containers with one command.
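The `depends_on` entries in the Compose file above control the order in which services start. As a rough illustration, and not Compose's actual implementation, the ordering can be modelled as a topological sort with Python's standard `graphlib` module (Python 3.9+). The `DEPENDS_ON` dictionary and `start_order` function are names invented for this sketch.

```python
from graphlib import TopologicalSorter

# Mirrors the depends_on entries in the Compose file: service -> services it waits for.
DEPENDS_ON = {
    "web": {"db", "cache"},
    "db": set(),
    "cache": set(),
}


def start_order(depends_on):
    """Return service names ordered so that dependencies come before dependents."""
    return list(TopologicalSorter(depends_on).static_order())


order = start_order(DEPENDS_ON)
print(order)  # "web" is always last; db and cache may appear in either order
```

Note that in this list form, `depends_on` only orders container startup. It does not wait for PostgreSQL to be ready to accept connections, so the application should still retry its first database connection.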

# 8. Container Networks

When you run multiple containers, they need to talk to each other. Docker creates virtual networks that connect containers.

By default, Docker Compose creates a network for all services defined in your `docker-compose.yml`. Containers use service names as hostnames. In our example, the `web` container connects to PostgreSQL using `db:5432` because `db` is the service name.
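To see where the service name ends up, here is a small Python sketch that splits the two connection URLs from the Compose file using only the standard library. The helper `describe_service_url` is hypothetical; a real application would usually hand the whole URL to its database or Redis client.

```python
from urllib.parse import urlsplit


def describe_service_url(url):
    """Split a connection URL into the parts a client library would use."""
    parts = urlsplit(url)
    return {
        "scheme": parts.scheme,
        "host": parts.hostname,  # the Compose service name, resolved by Docker's DNS
        "port": parts.port,
        "database": parts.path.lstrip("/") or None,
    }


db = describe_service_url("postgresql://postgres:secret@db:5432/myapp")
cache = describe_service_url("redis://cache:6379")
print(db["host"], db["port"])        # db 5432
print(cache["host"], cache["port"])  # cache 6379
```

The hostnames `db` and `cache` only resolve from containers attached to the same Docker network; from your host machine you would use `localhost` and a published port instead.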

You can also create custom networks manually:

    docker network create my-app-network

    docker run -d --network my-app-network --name api my-python-app:1.0

    docker run -d --network my-app-network --name cache redis:7

Now the `api` container can reach Redis at `cache:6379`. Docker provides several network drivers, of which you'll use the following most often:

- `bridge`: Default network for containers on a single host

- `host`: Container uses the host's network directly (no isolation)

- `none`: Container has no network access

Networks provide isolation. Containers on different networks can't communicate unless explicitly connected. This is useful for security because you can separate your frontend, backend, and database networks.

To see all networks, run:

    docker network ls

To inspect a network and see which containers are connected, run:

    docker network inspect my-app-network

# 9. Environment Variables and Docker Secrets

Hardcoding configuration is asking for trouble. Your database password shouldn't be the same in development and production. Your API keys definitely shouldn't live in your codebase.

Docker handles this through environment variables. Pass them in at runtime with the `-e` or `--env` flag, and your container gets the config it needs without baking values into the image.

Docker Compose makes this cleaner. Point to an `.env` file and keep your secrets out of version control. Swap in `.env.production` when you deploy, or define environment variables directly in your compose file if they are not sensitive.
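On the application side, reading this configuration might look like the following sketch. It assumes the `DATABASE_URL` and `REDIS_URL` variables from the Compose example; the `Settings` class, the `load_settings` function, and the `DEBUG` flag are hypothetical names chosen for illustration.

```python
import os
from dataclasses import dataclass


@dataclass(frozen=True)
class Settings:
    database_url: str
    redis_url: str
    debug: bool


def load_settings(env=None):
    """Build Settings from environment variables (defaults to os.environ)."""
    env = os.environ if env is None else env
    try:
        database_url = env["DATABASE_URL"]  # required: fail fast if it is missing
    except KeyError:
        raise RuntimeError("DATABASE_URL is not set") from None
    return Settings(
        database_url=database_url,
        redis_url=env.get("REDIS_URL", "redis://cache:6379"),
        # DEBUG is a hypothetical flag, not something Docker defines.
        debug=env.get("DEBUG", "false").lower() in {"1", "true", "yes"},
    )
```

Because the function accepts a plain dictionary, the same code can be exercised in tests without touching the real environment, for example `load_settings({"DATABASE_URL": "postgresql://postgres:secret@db:5432/myapp"})`.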

Docker Secrets take this further for production environments, especially in Swarm mode. Environment variables may show up in logs or process listings; secrets, by contrast, are encrypted in transit and at rest, then mounted as files in the container. Only services that need them get access. They're designed for passwords, tokens, certificates, and anything else that would be catastrophic if leaked.
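Since secrets arrive as files, the application has to read them from disk. The sketch below assumes the default Swarm mount location `/run/secrets/<name>` and falls back to an environment variable for local development. The function `read_secret` and the fallback convention (upper-casing the secret name) are choices made for this example, not part of Docker.

```python
import os
from pathlib import Path

SECRETS_DIR = Path("/run/secrets")  # default location for Swarm secrets in Linux containers


def read_secret(name, secrets_dir=SECRETS_DIR, env=None):
    """Return the secret `name`, preferring a mounted file over an environment variable."""
    env = os.environ if env is None else env
    secret_file = Path(secrets_dir) / name
    if secret_file.is_file():
        # Strip surrounding whitespace in case the file ends with a newline.
        return secret_file.read_text(encoding="utf-8").strip()
    value = env.get(name.upper())
    if value is None:
        raise RuntimeError(f"secret {name!r} not found as file or environment variable")
    return value
```

With this in place, `read_secret("db_password")` reads `/run/secrets/db_password` in production and `DB_PASSWORD` from the environment on a developer machine.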

The pattern is simple: separate code from configuration. Use environment variables for general config and secrets for sensitive data.

# 10. Container Registry

Docker Hub works fine for public images, but you don't want your company's application images publicly available. A private container registry is private storage for your Docker images. Popular options include the registries mentioned earlier: Amazon ECR, Google Container Registry (GCR), and Azure Container Registry (ACR).

For each of the above options, you can follow a similar procedure to publish, pull, and use images. For example, you would do the following with ECR.

Your local machine or continuous integration and continuous deployment (CI/CD) system first proves its identity to ECR. This allows Docker to securely interact with your private image registry instead of a public one. The locally built Docker image is given a fully qualified name that includes:

- The AWS account registry address

- The repository name

- The image version

This step tells Docker where the image will live in ECR. The image is then uploaded to the private ECR repository. Once pushed, the image is centrally stored, versioned, and available to authorized systems.
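The three parts of the fully qualified name can be assembled as in this minimal Python sketch. It follows the ECR registry address pattern `<account-id>.dkr.ecr.<region>.amazonaws.com`; the function name `ecr_image_uri` and every value passed to it are placeholders, not real resources.

```python
def ecr_image_uri(account_id, region, repository, tag):
    """Build the fully qualified image name that Docker needs before pushing to ECR."""
    if not (account_id.isdigit() and len(account_id) == 12):
        raise ValueError("AWS account IDs are 12-digit numbers")
    registry = f"{account_id}.dkr.ecr.{region}.amazonaws.com"
    return f"{registry}/{repository}:{tag}"


# All four values below are placeholders, not real resources.
uri = ecr_image_uri("123456789012", "us-east-1", "my-python-app", "1.0")
print(uri)  # 123456789012.dkr.ecr.us-east-1.amazonaws.com/my-python-app:1.0
```

The resulting string is what you would pass to `docker tag` and `docker push`, in the same way `username/my-python-app:1.0` was used for Docker Hub earlier.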

Production servers authenticate with ECR and download the image from the private registry. This keeps your deployment pipeline fast and secure. Instead of building images on production servers (slow and requires source code access), you build once, push to the registry, and pull on all servers.

Many CI/CD systems integrate with container registries. For example, a GitHub Actions workflow can build the image and push it to ECR, and your Kubernetes cluster can then pull it automatically.

# Wrapping Up

These ten concepts form Docker's foundation. Here is how they connect in a typical workflow:

- Write a Dockerfile with instructions for your app, and build an image from the Dockerfile

- Run a container from the image

- Use volumes to persist data

- Set environment variables and secrets for configuration and sensitive information

- Create a `docker-compose.yml` for multi-service apps and let Docker networks connect your containers

- Push your image to a registry, pull and run it anywhere

Start by containerizing a simple Python script. Add dependencies with a `requirements.txt` file. Then introduce a database using Docker Compose. Each step builds on the previous concepts. Docker isn't complicated once you understand these fundamentals. It's just a tool that packages applications consistently and runs them in isolated environments.

Happy exploring!

Bala Priya C is a developer and technical writer from India. She likes working at the intersection of math, programming, data science, and content creation. Her areas of interest and expertise include DevOps, data science, and natural language processing. She enjoys reading, writing, coding, and coffee! Currently, she's working on learning and sharing her knowledge with the developer community by authoring tutorials, how-to guides, opinion pieces, and more. Bala also creates engaging resource overviews and coding tutorials.