Today, AWS announced Amazon Managed Workflows for Apache Airflow (MWAA) Serverless. This is a new deployment option for MWAA that eliminates the operational overhead of managing Apache Airflow environments while optimizing costs through serverless scaling. This new offering addresses key challenges that data engineers and DevOps teams face when orchestrating workflows: operational scalability, cost optimization, and access management.

With MWAA Serverless you can focus on your workflow logic rather than monitoring provisioned capacity. You can now submit your Airflow workflows for execution on a schedule or on demand, paying only for the actual compute time used during each task's execution. The service automatically handles all infrastructure scaling so that your workflows run efficiently regardless of load.

Beyond simplified operations, MWAA Serverless introduces an updated security model for granular control through AWS Identity and Access Management (IAM). Each workflow can now have its own IAM permissions, running on a VPC of your choosing, so you can implement precise security controls without creating separate Airflow environments. This approach significantly reduces security management overhead while strengthening your security posture.

In this post, we demonstrate how to use MWAA Serverless to build and deploy scalable workflow automation solutions. We walk through practical examples of creating and deploying workflows, setting up observability through Amazon CloudWatch, and converting existing Apache Airflow DAGs (Directed Acyclic Graphs) to the serverless format. We also explore best practices for managing serverless workflows and show you how to implement monitoring and logging.

How does MWAA Serverless work?

MWAA Serverless processes your workflow definitions and executes them efficiently in service-managed Airflow environments, automatically scaling resources based on workflow demands. MWAA Serverless uses the Amazon Elastic Container Service (Amazon ECS) executor to run each individual task on its own ECS Fargate container, on either your VPC or a service-managed VPC. These containers then communicate back to their assigned Airflow cluster using the Airflow 3 Task API.

Figure 1: Amazon MWAA Architecture

MWAA Serverless uses declarative YAML configuration files based on the popular open source DAG Factory format to enhance security through task isolation. You have two options for creating these workflow definitions:

- Write the YAML workflow definition directly, as in the first workflow example in this post

- Convert an existing Python DAG to YAML with the conversion tool described later in this post

This declarative approach provides two key benefits. First, because MWAA Serverless reads workflow definitions from YAML, it can determine task scheduling without running any workflow code. Second, this allows MWAA Serverless to grant execution permissions only when tasks run, rather than requiring broad permissions at the workflow level. The result is a more secure environment where task permissions are precisely scoped and time limited.
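
To make the first benefit concrete, the following minimal Python sketch derives a valid task order purely from declared data, without importing or executing any workflow code. The dictionary stands in for a YAML definition that has already been loaded, and the key names (schedule, tasks, dependencies) and task names are illustrative assumptions, not the authoritative MWAA Serverless schema.

```python
from graphlib import TopologicalSorter

# Hypothetical workflow definition, as it might look after loading YAML.
# Key and task names are illustrative assumptions, not the service schema.
workflow = {
    "schedule": "@daily",
    "tasks": {
        "list_objects": {"dependencies": []},
        "write_listing": {"dependencies": ["list_objects"]},
        "notify": {"dependencies": ["write_listing"]},
    },
}


def execution_order(definition: dict) -> list[str]:
    """Return task names in a valid run order using only declared data."""
    # Map each task to the set of tasks that must finish before it starts.
    graph = {
        name: set(spec.get("dependencies", []))
        for name, spec in definition["tasks"].items()
    }
    # static_order() raises graphlib.CycleError if the graph is not acyclic.
    return list(TopologicalSorter(graph).static_order())


order = execution_order(workflow)
print(order)
```

Because the dependencies are plain data, a scheduler can inspect them (and reject cycles) before any task, or any task-level permission, is needed.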

Service considerations for MWAA Serverless

MWAA Serverless has the following limitations that you should consider when deciding between serverless and provisioned MWAA deployments:

- Operator support

  - MWAA Serverless only supports operators from the Amazon Provider Package.

  - To execute custom code or scripts, you'll need to use AWS services, such as:

- User interface

  - MWAA Serverless operates without using the Airflow web interface.

  - For workflow monitoring and management, we provide integration with Amazon CloudWatch and AWS CloudTrail.

Working with MWAA Serverless

Complete the following prerequisites and steps to use MWAA Serverless.

Prerequisites

Before you begin, verify you have the following requirements in place:

- Access and permissions

  - An AWS account

  - AWS Command Line Interface (AWS CLI) version 2.31.38 or later installed and configured

  - The appropriate permissions to create and modify IAM roles and policies, including the following required IAM permissions:

    - airflow-serverless:CreateWorkflow
    - airflow-serverless:DeleteWorkflow
    - airflow-serverless:GetTaskInstance
    - airflow-serverless:GetWorkflowRun
    - airflow-serverless:ListTaskInstances
    - airflow-serverless:ListWorkflowRuns
    - airflow-serverless:ListWorkflows
    - airflow-serverless:StartWorkflowRun
    - airflow-serverless:UpdateWorkflow
    - iam:CreateRole
    - iam:DeleteRole
    - iam:DeleteRolePolicy
    - iam:GetRole
    - iam:PutRolePolicy
    - iam:UpdateAssumeRolePolicy
    - logs:CreateLogGroup
    - logs:CreateLogStream
    - logs:PutLogEvents
    - airflow:GetEnvironment
    - airflow:ListEnvironments
    - s3:DeleteObject
    - s3:GetObject
    - s3:ListBucket
    - s3:PutObject
    - s3:Sync

  - Access to an Amazon Virtual Private Cloud (VPC) with internet connectivity

- Required AWS services – In addition to MWAA Serverless, you will need access to the following AWS services:

  - Amazon MWAA to access your existing Airflow environment(s)

  - Amazon CloudWatch to view logs

  - Amazon S3 for DAG and YAML file management

  - AWS IAM to control permissions

- Development environment

- Additional requirements

  - Basic familiarity with Apache Airflow concepts

  - Understanding of YAML syntax

  - Knowledge of AWS CLI commands
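
If you want to grant the IAM permissions listed under Access and permissions through a single policy, the following Python sketch assembles them into a policy document. The action names are copied verbatim from the list in this post. The wildcard resource is a placeholder assumption that you should scope down, and we suggest checking each action name against the IAM documentation before attaching the result.

```python
import json

# Action names copied verbatim from the prerequisites list in this post.
REQUIRED_ACTIONS = [
    "airflow-serverless:CreateWorkflow",
    "airflow-serverless:DeleteWorkflow",
    "airflow-serverless:GetTaskInstance",
    "airflow-serverless:GetWorkflowRun",
    "airflow-serverless:ListTaskInstances",
    "airflow-serverless:ListWorkflowRuns",
    "airflow-serverless:ListWorkflows",
    "airflow-serverless:StartWorkflowRun",
    "airflow-serverless:UpdateWorkflow",
    "iam:CreateRole",
    "iam:DeleteRole",
    "iam:DeleteRolePolicy",
    "iam:GetRole",
    "iam:PutRolePolicy",
    "iam:UpdateAssumeRolePolicy",
    "logs:CreateLogGroup",
    "logs:CreateLogStream",
    "logs:PutLogEvents",
    "airflow:GetEnvironment",
    "airflow:ListEnvironments",
    "s3:DeleteObject",
    "s3:GetObject",
    "s3:ListBucket",
    "s3:PutObject",
    "s3:Sync",
]


def build_policy(actions: list[str], resource: str = "*") -> dict:
    """Wrap the given actions in a single Allow statement."""
    # Resource "*" is a placeholder assumption; narrow it for real use.
    return {
        "Version": "2012-10-17",
        "Statement": [
            {"Effect": "Allow", "Action": sorted(actions), "Resource": resource}
        ],
    }


policy = build_policy(REQUIRED_ACTIONS)
print(json.dumps(policy, indent=2))
```

You could save the printed JSON to a file and attach it to your own user or role with the IAM console or the AWS CLI.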

Note: Throughout this post, we use example values that you'll need to replace with your own:

- Replace amzn-s3-demo-bucket with your S3 bucket name

- Replace 111122223333 with your AWS account number

- Replace us-east-2 with your AWS Region. MWAA Serverless is available in multiple AWS Regions. Check the List of AWS Services Available by Region for current availability.

Creating your first serverless workflow

Let's start by defining a simple workflow that gets a list of S3 objects and writes that list to a file in the same bucket. Create a new file called simple_s3_test.yaml with the following content:

For this workflow to run, you must create an execution role that has permissions to list and write to the above bucket. The role also needs to be assumable by MWAA Serverless. The following CLI commands create this role and its associated policy:
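
As a rough illustration of what such a role needs, the following Python sketch builds the two JSON documents involved: a trust policy that lets the service assume the role, and a permissions policy scoped to the example bucket. The service principal airflow-serverless.amazonaws.com is our assumption based on the airflow-serverless permission prefix, so confirm it in the documentation. The exact set of S3 actions your workflow needs may also differ.

```python
import json

BUCKET = "amzn-s3-demo-bucket"
# Assumed service principal for MWAA Serverless; confirm before use.
SERVICE_PRINCIPAL = "airflow-serverless.amazonaws.com"


def trust_policy(service: str) -> dict:
    """Trust policy allowing the given service to assume the role."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {"Service": service},
                "Action": "sts:AssumeRole",
            }
        ],
    }


def bucket_policy(bucket: str) -> dict:
    """Permissions to list the bucket and read/write its objects."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                # ListBucket applies to the bucket itself.
                "Effect": "Allow",
                "Action": "s3:ListBucket",
                "Resource": f"arn:aws:s3:::{bucket}",
            },
            {
                # Object actions apply to keys inside the bucket.
                "Effect": "Allow",
                "Action": ["s3:GetObject", "s3:PutObject"],
                "Resource": f"arn:aws:s3:::{bucket}/*",
            },
        ],
    }


trust = trust_policy(SERVICE_PRINCIPAL)
permissions = bucket_policy(BUCKET)
print(json.dumps(trust, indent=2))
print(json.dumps(permissions, indent=2))
```

The first document is the kind of input that aws iam create-role accepts through --assume-role-policy-document, and the second can be attached with aws iam put-role-policy through --policy-document.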

You then copy your YAML DAG to the same S3 bucket, and create your workflow using the role Arn returned by the preceding commands.

The output of the last command returns a WorkflowARN value, which you then use to run the workflow:

The output returns a RunId value, which you then use to check the status of the workflow run that you just started.
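
Checking the status usually means polling until the run reaches a final state. The following Python sketch shows one way to structure that loop without tying it to a specific client: the status lookup is passed in as a function, and the terminal states are supplied by the caller. The status strings in the demo (QUEUED, RUNNING, SUCCESS, FAILED) are made-up placeholders, not values confirmed for MWAA Serverless.

```python
import time
from typing import Callable, Collection


def wait_for_run(
    fetch_status: Callable[[], str],
    terminal_states: Collection[str],
    interval_seconds: float = 10.0,
    max_attempts: int = 30,
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    """Poll fetch_status() until it returns one of terminal_states."""
    for attempt in range(1, max_attempts + 1):
        status = fetch_status()
        if status in terminal_states:
            return status
        # Wait between checks, but not after the final attempt.
        if attempt < max_attempts:
            sleep(interval_seconds)
    raise TimeoutError(f"run still not finished after {max_attempts} checks")


# Stand-in for a real status lookup: replays a fixed, made-up sequence.
_fake_statuses = iter(["QUEUED", "RUNNING", "RUNNING", "SUCCESS"])
final_status = wait_for_run(
    fetch_status=lambda: next(_fake_statuses),
    terminal_states={"SUCCESS", "FAILED"},
    sleep=lambda _seconds: None,  # skip real waiting in this demo
)
print(final_status)
```

In practice, fetch_status would wrap whatever call you use to retrieve the run (for example, the GetWorkflowRun operation with your WorkflowARN and RunId) and return its status field.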

If you need to make a change to your YAML, you can copy it back to S3 and run the update-workflow command.

Converting Python DAGs to YAML format

AWS has published a conversion tool that uses the open-source Airflow DAG processor to serialize Python DAGs into YAML DAG Factory format. To install it, run the following:

For example, create the following DAG and name it create_s3_objects.py:

Once you have installed python-to-yaml-dag-converter-mwaa-serverless, you run:

Where the output will end with:

And the resulting YAML will look like:

Note that, because the YAML conversion is done after the DAG is parsed, the loop that creates the tasks runs first, and the resulting static list of tasks is written to the YAML document with their dependencies.
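
The following Python sketch illustrates the idea in isolation: a loop generates tasks while the definition is being built, and what gets serialized is only the resulting static structure. It does not use Airflow or the converter. The task names, the chained dependencies, and the output layout are hypothetical, and JSON is used here only to keep the example dependency-free.

```python
import json


def build_tasks(count: int) -> dict:
    """Generate `count` tasks in a loop, each depending on the previous one."""
    tasks = {}
    previous = None
    for index in range(count):
        name = f"create_object_{index}"  # hypothetical task naming
        tasks[name] = {"dependencies": [previous] if previous else []}
        previous = name
    return tasks


# The loop has already run by the time the definition is serialized,
# so the output contains three explicit tasks and no loop.
static_definition = {"create_s3_objects": {"tasks": build_tasks(3)}}
print(json.dumps(static_definition, indent=2))
```

One consequence is that changing the loop bounds in the Python DAG has no effect on a deployed workflow until you convert and update it again.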

Migrating an MWAA environment's DAGs to MWAA Serverless

You can take advantage of a provisioned MWAA environment to develop and test your workflows and then move them to serverless to run efficiently at scale. Further, if your MWAA environment uses only operators that are compatible with MWAA Serverless, you can convert all of the environment's DAGs at once. The first step is to allow MWAA Serverless to assume the MWAA execution role through a trust relationship. This is a one-time operation for each MWAA execution role, and can be performed manually in the IAM console or using an AWS CLI command as follows:
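
If you prefer to prepare the updated trust policy programmatically, the following Python sketch adds a statement for the serverless service to an existing trust policy, and leaves the policy unchanged if such a statement is already present. The principal airflow-serverless.amazonaws.com is our assumption based on the airflow-serverless permission prefix, and the sketch assumes that Statement is a list.

```python
import copy
import json

# Assumed service principal for MWAA Serverless; confirm before use.
SERVERLESS_PRINCIPAL = "airflow-serverless.amazonaws.com"


def _as_list(value) -> list:
    """Normalize an IAM field that may be absent, a string, or a list."""
    if value is None:
        return []
    return [value] if isinstance(value, str) else list(value)


def allow_service_to_assume(trust_policy: dict, service: str) -> dict:
    """Return a copy of trust_policy that lets `service` assume the role."""
    updated = copy.deepcopy(trust_policy)
    for statement in updated.get("Statement", []):
        principal = statement.get("Principal")
        services = (
            _as_list(principal.get("Service")) if isinstance(principal, dict) else []
        )
        already_allowed = (
            statement.get("Effect") == "Allow"
            and "sts:AssumeRole" in _as_list(statement.get("Action"))
            and service in services
        )
        if already_allowed:
            return updated  # nothing to add
    updated.setdefault("Statement", []).append(
        {
            "Effect": "Allow",
            "Principal": {"Service": service},
            "Action": "sts:AssumeRole",
        }
    )
    return updated


# Example of an existing MWAA execution role trust policy.
existing_trust = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Effect": "Allow",
            "Principal": {
                "Service": ["airflow.amazonaws.com", "airflow-env.amazonaws.com"]
            },
            "Action": "sts:AssumeRole",
        }
    ],
}

new_trust = allow_service_to_assume(existing_trust, SERVERLESS_PRINCIPAL)
print(json.dumps(new_trust, indent=2))
```

The resulting document is the kind of input that aws iam update-assume-role-policy accepts through its --policy-document parameter. Appending a statement, rather than editing the existing one, keeps the original MWAA trust intact.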

Now we can loop through each successfully converted DAG and create a serverless workflow for each.

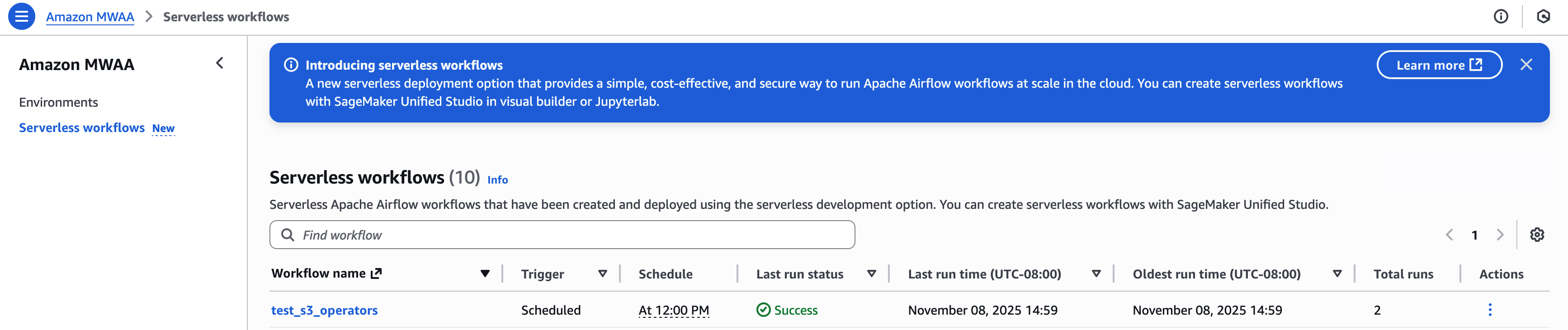

To see a list of your created workflows, run:

Monitoring and observability

MWAA Serverless workflow execution status is returned by the GetWorkflowRun operation, which returns details for that particular run. If there are errors in the workflow definition, they are returned under RunDetail in the ErrorMessage field, as in the following example:

Workflows that are properly defined, but whose tasks fail, will return "ErrorMessage": "Workflow execution failed":
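
When you automate these checks, it can help to separate the two failure cases described above. The following Python sketch does so for a response that has already been parsed into a dictionary. It relies only on the RunDetail and ErrorMessage fields named in this post. The category labels and the sample definition error text are made up for illustration.

```python
TASK_FAILURE_MESSAGE = "Workflow execution failed"


def classify_run(response: dict) -> str:
    """Classify a GetWorkflowRun-style response by its ErrorMessage."""
    message = response.get("RunDetail", {}).get("ErrorMessage")
    if not message:
        # No error reported; the run may be successful or still in progress.
        return "no_error_reported"
    if message == TASK_FAILURE_MESSAGE:
        # Definition was valid, but at least one task failed: check task logs.
        return "task_failure"
    # Any other message is treated as a problem with the definition itself.
    return "definition_error"


# Sample responses; the second error text is a made-up placeholder.
samples = [
    {"RunDetail": {"ErrorMessage": "Workflow execution failed"}},
    {"RunDetail": {"ErrorMessage": "example definition error text"}},
    {"RunDetail": {}},
]
for sample in samples:
    print(classify_run(sample))
```

A task_failure result is the signal to move on to the task logs, while a definition_error result usually means the YAML needs to be fixed and the workflow updated.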

MWAA Serverless task logs are stored in the CloudWatch log group /aws/mwaa-serverless/<workflow id>/ (where <workflow id> is the same string as the unique workflow ID in the ARN of the workflow). For specific task log streams, you will need to list the tasks for the workflow run and then get each task's information. You can combine these operations into a single CLI command.
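
The log group name can be derived from the workflow ARN. The following Python sketch shows one way to do that, assuming that the ARN's resource part has the form workflow/ followed by the workflow ID and that the log group name ends with a trailing slash as written above. The sample ARN is hypothetical.

```python
LOG_GROUP_PREFIX = "/aws/mwaa-serverless/"


def log_group_for(workflow_arn: str) -> str:
    """Derive the CloudWatch log group name from a workflow ARN."""
    # ARN layout: arn:partition:service:region:account-id:resource
    parts = workflow_arn.split(":", 5)
    if len(parts) != 6 or parts[0] != "arn":
        raise ValueError(f"not a valid ARN: {workflow_arn!r}")
    resource = parts[5]
    # Assumption: the resource looks like "workflow/<workflow id>".
    workflow_id = resource.split("/", 1)[-1]
    if not workflow_id:
        raise ValueError(f"no workflow ID found in: {workflow_arn!r}")
    return f"{LOG_GROUP_PREFIX}{workflow_id}/"


# Hypothetical ARN using the example Region and account number from this post.
example_arn = (
    "arn:aws:airflow-serverless:us-east-2:111122223333:"
    "workflow/simple_s3_test-abc123"
)
log_group = log_group_for(example_arn)
print(log_group)
```

You can then use the derived name when browsing or querying logs in CloudWatch.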

Which would result in the following:

At which point, you would use the CloudWatch LogStream output to debug your workflow.

You can view and manage your workflows in the Amazon MWAA Serverless console:

For an example that creates detailed metrics and a monitoring dashboard using AWS Lambda, Amazon CloudWatch, Amazon DynamoDB, and Amazon EventBridge, review the example in this GitHub repository.

Clean up resources

To avoid incurring ongoing charges, follow these steps to clean up all resources created during this tutorial:

- Delete MWAA Serverless workflows – Run this AWS CLI command to delete all workflows:

- Remove the IAM roles and policies created for this tutorial:

- Remove the YAML workflow definitions from your S3 bucket:

After completing these steps, verify in the AWS Management Console that all resources have been properly removed. Remember that CloudWatch Logs are retained by default and may need to be deleted separately if you want to remove all traces of your workflow executions.

If you encounter any errors during cleanup, verify that you have the necessary permissions and that the resources exist before attempting to delete them. Some resources may have dependencies that require them to be deleted in a specific order.

Conclusion

In this post, we explored Amazon MWAA Serverless, a new deployment option that simplifies Apache Airflow workflow management. We demonstrated how to create workflows using YAML definitions, convert existing Python DAGs to the serverless format, and monitor your workflows.

MWAA Serverless offers several key advantages:

- No provisioning overhead

- Pay-per-use pricing model

- Automatic scaling based on workflow demands

- Enhanced security through granular IAM permissions

- Simplified workflow definitions using YAML

To learn more about MWAA Serverless, review the documentation.

About the authors